Welcome to another weekend. I’m having my coffee, sitting on the couch, and typing on my laptop. It’s 5am, my cats have all had their breakfast, and I’m going to knock out a bunch of links so I can close them. I finished at 7:45am, so hold onto your seats: I made a real dent in my open tabs. Be prepared for substacks about sunk cost boyfriends, a lot of artificial intelligence (the good, the bad and the ugly), a bunch of Guido Imbens’ articles from 2024, a bunch of economists estimating the effect of word of mouth on sales of creative content like movies and books, the best new show on television since Coffin Flop on Corncob TV, howling huskies and exhausted Golden Retrievers, Bob Dylan, and then some more AI.

Fertility, Marriage and Sunk Cost Boyfriends

I have a project on dating apps and “permanent dating”, and one of my coauthors sent me this. The author reflects on her own experience at twenty-two, when she wanted her boyfriend to propose after years of dating. She describes the pressures and societal messages that discourage young women from seeking commitment and contrasts them with the reality that indefinite, noncommittal live-in relationships often serve men’s interests while delaying women’s fertility and life plans. She critiques narratives she had once believed, which suggested that commitment (in her case, marriage) was unimportant, and argues that these ideas can inadvertently benefit men who are content to delay commitment rather than empower women who want marriage and family. She also uses a phrase I had never heard before: “sunk cost boyfriend”. That phrase really caught my attention. A sunk cost boyfriend is a man who, after years of a relationship, keeps delaying commitment, leaving her feeling stuck because she has invested a tremendous amount of time and energy. It’s not so much that he’s a sunk cost boyfriend, though, as that she committed the sunk cost fallacy with her boyfriend. She wants to leave, but reasons that she has already invested so much that she is unwilling to. It is not surprising, though, that the one area where we struggle with rational-choice rules like “ignore sunk costs” and “focus only on expected value” (discounted utility net of expected costs, however you decide to compute that) is the one area that is likely not rational in the first place: love and romance. This is a perspective I had not heard before, but it is one my coauthors and I have been studying empirically: permanent dating may be an equilibrium that even the people in it do not want.
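To make the “ignore sunk costs” rule concrete, here is a stylized sketch in my own notation (nothing here comes from the essay itself). Assume δ is a discount factor, u and c are the utility and costs in period t on each path from today forward, and S is the time and energy already invested in the relationship.

```latex
\begin{aligned}
V_{\text{stay}}  &= \mathbb{E}\Big[\textstyle\sum_{t \ge 0} \delta^{t}\big(u^{\text{stay}}_{t} - c^{\text{stay}}_{t}\big)\Big], \\
V_{\text{leave}} &= \mathbb{E}\Big[\textstyle\sum_{t \ge 0} \delta^{t}\big(u^{\text{leave}}_{t} - c^{\text{leave}}_{t}\big)\Big], \\
\text{stay} &\iff V_{\text{stay}} - S > V_{\text{leave}} - S \iff V_{\text{stay}} > V_{\text{leave}}.
\end{aligned}
```

Because S is already spent no matter which path she takes, it shows up on both sides and cancels. The sunk cost fallacy is letting S tip the decision anyway.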

Strawberry Fields

You probably saw this, but OpenAI released its new large language model the other day, named o1-preview, which people had been calling Strawberry before its release. I really wish that name would stick, as I absolutely hate saying “o1-preview”. Maybe it will when we get the full o1 model, but unless OpenAI calls it Strawberry, I doubt it. Anyway, the NYT talks about it. It’s a big jump forward in “advanced reasoning tasks” in mathematics, coding and PhD-level science coursework. It reasons through a kind of back-and-forth trial and error, somewhat like how ChatGPT-4o will use Python to figure out technical tasks: Python throws an error when the code fails, and ChatGPT-4o somehow responds to the error and tries a different approach. Strawberry is an improvement even over that approach, which ChatGPT-4o only sometimes takes (it will if you ask it to, which I often do). Marginal Revolution gives a rundown of responses. Here’s OpenAI’s announcement.

I’ve been playing around with it, but I don’t yet have a good handle on when I want to use it. I do know it isn’t Cosmos, because I asked it if it was Cosmos and it said no, so I don’t think it uses the custom instructions or accesses the memories. Maybe the way to think about ChatGPT-4o and Strawberry is that ChatGPT-4o is a Swiss army knife that does 24 things fairly well, but reasoning isn’t one of them. Strawberry does one thing better, and sometimes very well, which is reasoning, but not the other 24 things. So ChatGPT-4o is the more general chatbot and is excellent at conversational work, including prediction work, as we show in our ElectionGPT and related projects, but Strawberry may be the one you want for more technical work like coding and math, and perhaps even for solving problems in econometrics and theory. It won’t surprise me when someone makes a breakthrough using it, or if that person comes from a lower-ranked program, given the documented evidence that large language models have larger effects on lower-skill workers within high-skill fields.

This is a Medium post from this summer expressing a pretty common point of view about large language models. I’m not sure why I had it open; it may have been because of OpenAI releasing Strawberry (aka o1-preview) the other day, which I’ll get into momentarily. The author is talking about AGI (Artificial General Intelligence), which he defines as an AI system capable of performing any intellectual task that a human can, with true understanding and creative problem-solving across a wide range of domains. He critiques the hype surrounding AI, pointing out that large language models like GPT-4o are trained on static data and are unable to replicate the creative problem-solving and iterative decision-making necessary for true intelligence. He emphasizes that despite their impressive performance on tasks like generating boilerplate code, LLMs lack the ability to understand or navigate the complex, messy realities of real-world problem-solving. The post argues that AGI will require more than just more data and computational power; it will demand deeper insights into the underlying complexities of intelligence, technology, and physics.

To be honest, I am sort of exhausted by these arguments. They’ve been coming out ever since ChatGPT-3.5, and even 4, with people saying “LLMs are stochastic parrots” and concluding that they therefore aren’t really valuable. It feels like people looking for a way to justify updating their substacks with recycled content. But even without getting into this week’s Strawberry (or o1-preview, which doesn’t quite roll off the tongue like Strawberry), consider this metaphor.

In July 1965, Bob Dylan played the Newport Folk Festival and famously performed with an electric band, opening with “Maggie’s Farm”. You can find a clip here.

To say people were upset would be an understatement. According to the article I just linked to, they screamed that he was a traitor and a Judas. It outraged his folk music fans, who had already embraced Dylan as a leading figure in the acoustic folk movement (which, pause: Dylan is the man). Anyway, this is the metaphor I now hear every time someone insists that LLMs are flawed instruments because they aren’t AGI, i.e., that they are flawed and limited. In reality, that is more a reflection of the critics’ own lack of imagination projected onto the instrument. The limitations belong not to the instrument but to them.

On that note, I revisited an ingenious method that a psychologist devised in spring 2023 to try to detect whether large language models were actually reasoning or just engaging in counterfeit reasoning. I applied it to this week’s Strawberry model, and if you click through, you can see how it performed relative to GPT-3.5 and GPT-4 around 18 months ago. The TL;DR is that, unlike those models back then, and probably in contradiction to that Medium post, Strawberry at least appears to use a method that looks more like reasoning.

Sometimes I think critics of AGI aren’t just saying that LLMs cannot reason, but something closer to saying that they don’t have “souls” or consciousness and therefore cannot be considered truly intelligent. They don’t come out and literally say it that way, but with each advancement they seem to say the same thing: that these LLMs are not “intelligent”. Sometimes they say it in a way that feels like begging the question (i.e., assuming the conclusion), and/or they keep moving the goal posts in a way that brings them closer and closer to asserting mysterious ontological claims about humans and consciousness.

This 80,000 Hours article from the other day, for instance, discusses the moral status of digital minds, raising the question of whether future AI systems could be conscious or have moral status. The author explores the risks of both over- and under-attributing moral significance to AI systems, noting the potential scale of creating conscious digital minds. It emphasizes that humanity is unprepared to assess AI consciousness and calls for building a research field to address these questions before AI advances further. The issue is presented as highly neglected but potentially critical. I keep thinking that these constant declarations of what LLMs are not are pushing the conversation away from figuring out what they are, and what exactly that alone implies for their role in our lives going forward. If you just keep asserting that they are not whatever thing you’ve already decided they aren’t, then I think we not only obscure their value but possibly shortchange our moral lives too, since it seems to reflect a reluctance to update our moral views in the face of new information.

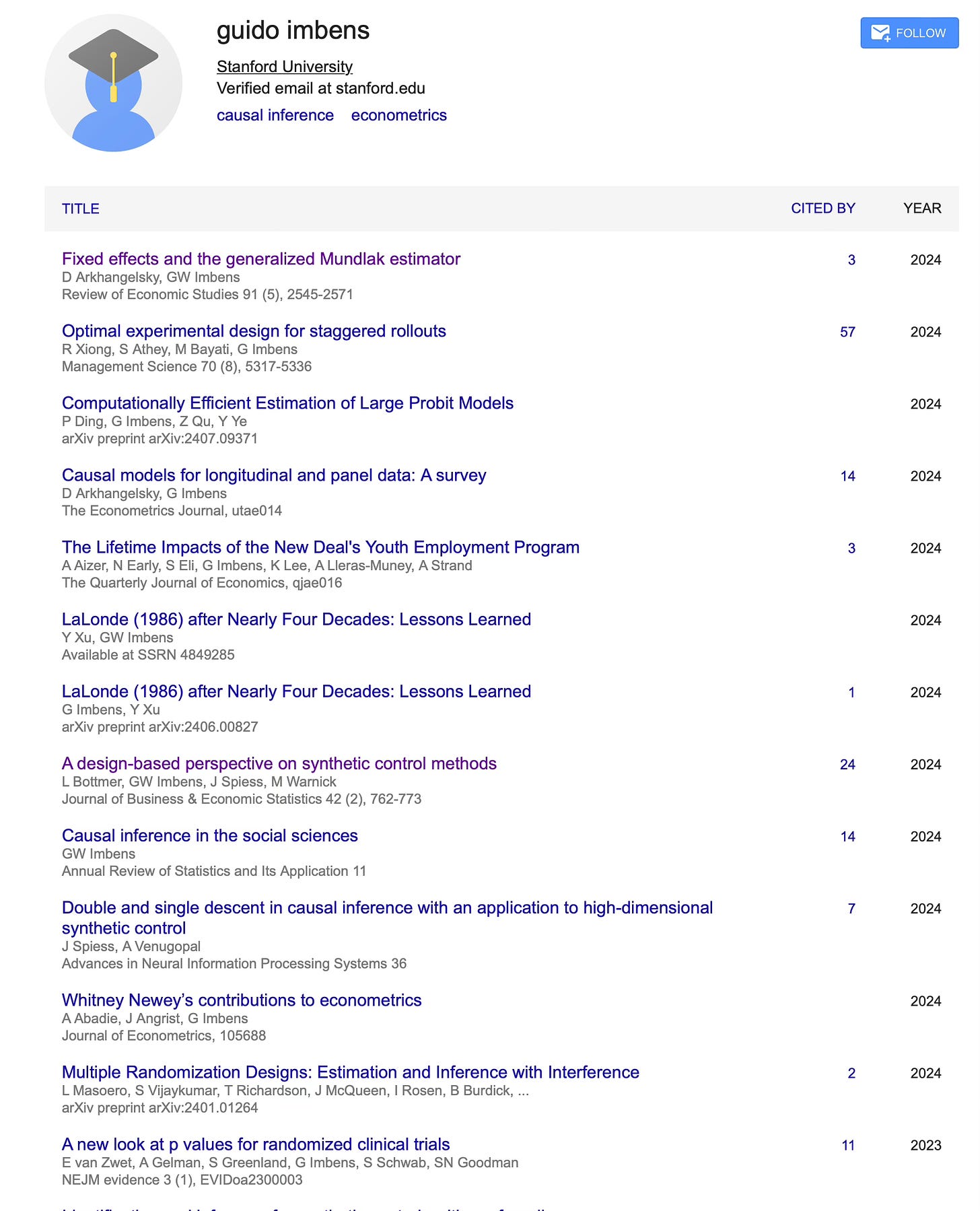

Guido Imbens’ Lost Publications from 2024

Here’s an oldie but a goodie. I haven’t seen this article in a while, but at one point, while preparing the Mixtape way back in the day, I read it really closely. It’s a 2016 working paper by Susan Athey and Guido Imbens (five years before he won the Nobel Prize) on the state of causal inference in applied work in economics and program evaluation. They explore three key areas: identification strategies in program evaluation (highlighting synthetic control methods and regression discontinuity); the use of supplementary analyses like placebo and sensitivity checks to strengthen credibility; and the application of machine learning techniques for adjusting for differences between treatment and control groups and for estimating heterogeneous treatment effects in high-dimensional settings. They offer practical recommendations for applied econometric work. Note that they would go on to publish papers on synthetic control: synthetic difference-in-differences, matrix completion with nuclear norm regularization, and a design-based approach to synthetic control.

Speaking of which, Guido quit updating his website. I guess winning the Nobel Prize can do that. So if you want to find out what he’s doing, I think you just have to use his Google Scholar page and re-sort by year.

So don’t let Imbens’ online vita and webpage fool you: he did not stop writing papers in 2019. Google Scholar lists 10 papers from 2024 alone. For instance, I didn’t even realize he had a new Restud with Arkhangelsky (a coauthor on the synthetic difference-in-differences paper) about the Mundlak estimator, which I had not heard of until Jeff Wooldridge’s imputation-style diff-in-diff estimator. The Mundlak estimator helps control for unobserved group-level differences when analyzing data where people are grouped (e.g., by region or company). Instead of using fixed effects, this approach adjusts for those differences by including group averages of individual characteristics as controls, which makes the analysis more flexible. Arkhangelsky and Imbens’ new Restud shows how this method can be used to estimate the effects of a policy or treatment more accurately, helping researchers draw better conclusions in observational studies where groups might differ in unmeasured ways.
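To see the basic Mundlak idea, here is a minimal Python sketch of the classic linear version, not the generalized estimator in Arkhangelsky and Imbens’ paper. The simulated data, the function name `mundlak_vs_fixed_effects`, and all parameter values are made up for illustration. It compares pooled OLS, the within (fixed effects) estimator, and a regression that simply adds the group mean of x as a control.

```python
import numpy as np

# Illustrative sketch only: simulated data and a hypothetical helper name.
def mundlak_vs_fixed_effects(n_groups=200, n_per_group=10, beta=1.5, seed=0):
    """Simulate grouped data where an unobserved group effect is correlated with x,
    then return the slope on x from (1) pooled OLS, (2) the within / fixed effects
    estimator, and (3) the Mundlak regression that adds the group mean of x."""
    rng = np.random.default_rng(seed)
    g = np.repeat(np.arange(n_groups), n_per_group)        # group id for each row
    alpha = rng.normal(size=n_groups)                       # unobserved group effect
    x = alpha[g] + rng.normal(size=g.size)                  # x is correlated with alpha
    y = beta * x + 2.0 * alpha[g] + rng.normal(size=g.size)

    counts = np.bincount(g)
    xbar = (np.bincount(g, weights=x) / counts)[g]          # group mean of x, per row
    ybar = (np.bincount(g, weights=y) / counts)[g]          # group mean of y, per row
    ones = np.ones_like(x)

    # (1) Pooled OLS ignores alpha, so it is biased upward in this simulation
    b_pooled = np.linalg.lstsq(np.column_stack([ones, x]), y, rcond=None)[0][1]

    # (2) Fixed effects via the within transformation (demean by group)
    xd, yd = x - xbar, y - ybar
    b_fe = (xd @ yd) / (xd @ xd)

    # (3) Mundlak: keep x in levels and control for its group mean
    b_mundlak = np.linalg.lstsq(np.column_stack([ones, x, xbar]), y, rcond=None)[0][1]
    return b_pooled, b_fe, b_mundlak

if __name__ == "__main__":
    print(mundlak_vs_fixed_effects())
```

In this linear case the Mundlak slope matches the fixed effects slope up to floating-point error, because x minus its group mean is orthogonal to anything that is constant within groups. The appeal of Mundlak’s device is that it keeps group-level variables in the model instead of differencing them away; the Restud paper’s generalization goes well beyond this toy setup.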

And here’s something in the Journal of Econometrics by Abadie, Angrist and Imbens on Whitney Newey’s contributions to econometrics. I can’t quite figure out what it is, though, as I don’t have JOE access at home. The DOI link says it’s an editorial.

One last one, since I can’t devote a whole substack to Imbens’ 2024 vita: here is his article in the Annual Review of Statistics and Its Application, for those who want to dig a bit more into what Imbens sees as the accomplishments, applications and open questions in causal inference in the social sciences. Might want to keep that one open a little longer…

On a related note, I find it more and more interesting how much the applied, practical empiricism we got from Princeton’s Industrial Relations Section was intertwined with the way causal inference unfolded. It was so regularly focused on things like training programs, a topic I explored in a substack recently.

Who Brings Potential Outcomes Notation Into Economics?

David McKenzie’s World Bank blog post discusses issues with joint orthogonality tests, which are commonly used to check balance between treatment and control groups in experiments. These tests, especially with many covariates and small samples, can over-reject the null hypothesis, leading researchers to incorrectly conclude that the groups are imbalanced. He references a paper by Kerwin, Rostom, and Sterck that proposes randomization inference as a better alternative for such tests. Their research shows that standard F-tests often falsely indicate imbalance and that their proposed method corrects this problem, giving accurate assessments of balance between study groups. McKenzie also advises limiting the number of covariates and handling missing data carefully to avoid inflated rejection rates. I have a primer on Fisher’s sharp null and randomization inference in the Mixtape, which you can find online here if you want to dig into this topic more.
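For readers who want to see the mechanics, here is a minimal Python sketch of a randomization-inference joint balance test in the spirit of what McKenzie describes. It assumes complete randomization with a fixed number of treated units (with stratified or clustered designs you would re-randomize within strata or clusters instead), it uses a classical regression F-statistic as the test statistic, and the function names are hypothetical rather than taken from Kerwin, Rostom, and Sterck’s code.

```python
import numpy as np

# Illustrative sketch: hypothetical helper names; assumes complete randomization.
def ols_f_stat(y, X):
    """Classical F-statistic for H0: all slopes are zero in a regression
    of y on a constant plus the columns of X."""
    n, k = X.shape
    Z = np.column_stack([np.ones(n), X])
    coef, *_ = np.linalg.lstsq(Z, y, rcond=None)
    rss = np.sum((y - Z @ coef) ** 2)          # residual sum of squares
    tss = np.sum((y - y.mean()) ** 2)          # total sum of squares
    return ((tss - rss) / k) / (rss / (n - k - 1))

def ri_balance_pvalue(treat, X, n_draws=2000, seed=0):
    """Randomization-inference p-value for joint balance.

    Regress the treatment indicator on the covariates, then reshuffle the
    treatment vector many times (same number treated) and count how often the
    reshuffled F-statistic is at least as large as the observed one. The
    reference distribution comes from the shuffles, not from an F table."""
    rng = np.random.default_rng(seed)
    f_obs = ols_f_stat(treat, X)
    f_perm = np.array([ols_f_stat(rng.permutation(treat), X) for _ in range(n_draws)])
    # Adding 1 to numerator and denominator counts the observed assignment itself
    return (1 + np.sum(f_perm >= f_obs)) / (1 + n_draws)

if __name__ == "__main__":
    rng = np.random.default_rng(1)
    X = rng.normal(size=(60, 20))                          # small n, many covariates (simulated)
    treat = rng.permutation(np.repeat([0.0, 1.0], 30))    # a genuinely random assignment
    print(ri_balance_pvalue(treat, X))
```

The design choice worth noticing is that the statistic itself can be the same F you would normally compute; what changes is that its null distribution comes from re-running the actual randomization, which sidesteps the small-sample, many-covariate problems with the usual asymptotic reference distribution.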

A new study finds that trees lower the risk of an ADHD diagnosis. I might need to be the guy in the seminar who raises his hand and suggests an instrument for trees in the next paper. I suspect this paper is deep in the uncanny valley of causal inference.

Baylor Masters in Economics, the Economics Profession and Skill Biased Technological Change

This week I learned that Baylor’s master’s program in economics is listed 28th on a list of top master’s programs. Come study with us! I will treat the incoming 2025 cohort to a breakfast burrito from Sergio’s, but I’m sure my colleagues will do something even better.

Speaking of economics, David Deming has an Atlantic article arguing that economics as a profession is too concentrated at the top. He critiques the profession (probably more the profession than the actual field) for its insular nature and argues that prestigious prizes like the Nobel are concentrated among a small group of elite universities. In his telling, the field has become overly focused on theory and status, often at the expense of real-world impact, and while the shift toward empirical research is promising, it’s hindered by a lack of public funding that limits access to only the wealthiest institutions. He suggests increased funding, more public-focused research incentives, and prizes that reward practical contributions to society as solutions.

I love empirical work as much as the next guy, but I don’t think it holds a special place above theory and measurement. They are all pillars of science, and I’m not sure I see the field as overly focused on theory anyway. I do, however, definitely see it as very focused on status, just like most of society.

Tracy Morgan from SNL and 30 Rock talks about the night he nearly died in a crash with a Walmart truck whose driver had gone 24 hours without any sleep. The crash killed one of the people on the bus with Morgan, who himself suffered major injuries.

In the long run, I hope such stories will be less common once we have fully adjusted to self-driving trucks, but the adjustment costs are likely to strain workers for whom trucking has been a livelihood. It’s another example, I’m sure, of skill biased technological change, or perhaps “low skill biased technological change”, a term Elizabeth Cascio coined when talking about fracking. Cascio was a Card student, and I interviewed her recently for my substack as part of my larger story about the “children and grandchildren of the revolution”. Can’t wait to post it.

Speaking of which, I got back into 30 Rock recently and had forgotten about the scene where Tracy Morgan’s character learns what the uncanny valley is. Here’s a piece about that scene.

Here are David Card and the late John DiNardo talking about the skill biased technological change literature, which I predict will see a renaissance as the restructuring of the American economy around artificial intelligence continues.

Here’s Mankiw’s classic in new Keynesian macro on small menu costs and the business cycle. This was either his first or one of his first pubs after graduating from MIT.

Thomas Coleman, who wrote a very interesting history of John Snow’s “grand experiment”, which used diff-in-diff to study whether cholera was waterborne or airborne, also has a piece from 2019 on Friedman and Schwartz’s famous book on the Great Depression.

Itai Sher at UMass Amherst’s economics department has a forthcoming solo-authored article in the American Economic Review. For those interested in welfare economics, and those interested in public finance and optimal tax policy, check it out. Congratulations to Itai!

Stories, Word of Mouth, the Black Market for Citations and Other Media

Speaking of controversies I actually care about but which are also ridiculous distractions from reality, there’s a “civil war” among Star Wars fans. First, might want to take this phrasing down a notch; how about calling it a “clone war” among Star Wars fans? Anyway, it’s between fans of Disney’s recently canceled show The Acolyte and the many YouTubers and others on social media who, according to critics, were not merely expressing their opinions but were engaging in the kind of viral “cancel culture” campaign we have come to accept as part of our social media saturated lives, only aimed at a show. I do think they succeeded in getting people not to watch the show, but hey, that’s life. The Acolyte was an experience good, meaning you had to watch it to know whether you liked it, and bad reviews can keep you from doing so.

There’s actually a really wonderful 2005 paper by Reinstein and Snyder in the Journal of Industrial Economics, titled “The Influence of Expert Reviews on Consumer Demand for Experience Goods: A Case Study of Movie Critics”, that tried to determine the causal impact of expert movie reviews on box office revenues. They exploited variation in the timing of movie releases and the publication of reviews (Siskel and Ebert’s, specifically) to isolate the effect of critics’ opinions from other factors influencing demand, and found that positive reviews significantly increase box office earnings, suggesting that expert opinions can sway consumer decisions. Using diff-in-diff, the study provides evidence that this effect is causal rather than merely correlational.
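Here is a stylized 2x2 diff-in-diff sketch of that timing logic in Python. It is not Reinstein and Snyder’s actual specification. It assumes the outcome is something like opening-weekend revenue, that `positive` flags a positive review, and that `before` flags a review published before the opening weekend (so only those reviews can influence it). All data are simulated and the function names are hypothetical.

```python
import numpy as np

# Illustrative sketch: hypothetical names, simulated data, stylized 2x2 design.
def did_from_means(y, positive, before):
    """2x2 DiD from cell means: the positive-minus-negative gap among films
    reviewed before opening, minus the same gap among films reviewed after."""
    def cell_mean(p, b):
        return y[(positive == p) & (before == b)].mean()
    return (cell_mean(1, 1) - cell_mean(0, 1)) - (cell_mean(1, 0) - cell_mean(0, 0))

def did_from_regression(y, positive, before):
    """The same number as the interaction coefficient d in
    y = a + b*positive + c*before + d*(positive*before) + error."""
    X = np.column_stack([np.ones_like(y), positive, before, positive * before])
    return np.linalg.lstsq(X, y, rcond=None)[0][3]

if __name__ == "__main__":
    # Invented data: the true effect of a positive review landing before opening is 2.0
    rng = np.random.default_rng(0)
    n = 4000
    positive = rng.integers(0, 2, size=n)
    before = rng.integers(0, 2, size=n)
    y = 10 + 1.0 * positive + 0.5 * before + 2.0 * positive * before + rng.normal(size=n)
    print(did_from_means(y, positive, before), did_from_regression(y, positive, before))
```

The positive-minus-negative gap among films reviewed after opening captures the fact that good movies get good reviews anyway; subtracting it leaves the part of the gap that only exists when the review could actually reach audiences first. The two functions return the same number because the interaction regression is saturated.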

Judy Chevalier and Dina Mayzlin have a 2006 article in the Journal of Marketing Research (with nearly 10,000 cites, I think) that studied the same question but focused on book sales. They wanted to know how consumer reviews affect book sales, and compared sales and reviews on the Amazon and Barnes & Noble websites. They also found that positive online reviews led to increased sales. Negative reviews cut the other way, and the impact of one-star reviews was actually larger than that of five-star reviews. They conclude that online word of mouth has a causal influence on purchasing behavior.

When I was proposing to publishers that I would only publish my book, Causal Inference: The Mixtape, if they allowed a free online version, I pointed them to an article by Abhishek Nagaraj and Imke Reimers titled “Digitization and the Market for Physical Works: Evidence from the Google Books Project” (then a working paper, now published in AEJ: Economic Policy). It’s possible, and an understandable worry, that free books could cannibalize book sales, but using the digitization of books by the Google Books Project, they find that making books available digitally increases demand for physical versions, particularly for lesser-known authors (like me). I had evidence for that too, but never took a screenshot, as it all happened really fast. When I posted the book free online, it was before the book was available for sale on Amazon. As soon as the free book went up, Amazon pre-orders shot up. I always had the feeling that the free book and the physical book went together, but only if the price of the physical book could be kept low and it was a paperback, because it needed to be something you could throw in your messenger bag. So those were also points I insisted on when we were shopping the book proposal around. Yale University Press came in with an offer that included paying for the song lyrics and agreed to go with footnotes instead of endnotes. I’m team footnotes.

Listeners of my podcast know that I like to ask guests about their reaction to this old article by William Shockley, winner of the Nobel Prize in Physics for his work on the transistor, laying out his theory of highly cited scientists.

But I suspect the phenomenon of high citation volume is now a bit more opaque because of this emerging “black market for academic citations”. I bet it is partly driven by the returns to citations (which have always been there), improved tracking of one’s citations (e.g., Google Scholar), and the display of our h-index, a statistic I don’t think any of us knew about ten years ago but which people are now glad to put on their vitas. But these metrics can be hacked, so deans and chairs and everyone else should be careful. One of the things I do appreciate about economics’ deeply hierarchical journal structure is that I think you can really only hack the h-index in the far left tail of the journal quality distribution. I doubt you can buy 50 cites in the QJE, in other words. The growth in journal mills is probably where this is coming from, but I haven’t seen people really dig into this.
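Since the h-index comes up here, a minimal Python sketch of how it is computed shows why it is so easy to nudge: a paper’s citations only matter when that paper sits right at the h threshold. The helper name is hypothetical and the citation counts are invented.

```python
# Illustrative sketch: hypothetical helper name, invented citation counts.
def h_index(citations):
    """Return the largest h such that at least h papers have >= h citations each."""
    ranked = sorted(citations, reverse=True)     # most-cited paper first
    h = 0
    for rank, cites in enumerate(ranked, start=1):
        if cites >= rank:
            h = rank                             # the top `rank` papers all clear the bar
        else:
            break                                # every later paper has even fewer cites
    return h

if __name__ == "__main__":
    print(h_index([50, 30, 6, 5, 4, 3, 2, 1]))   # 4
    print(h_index([50, 30, 6, 5, 5, 3, 2, 1]))   # one extra cite on a marginal paper: 5
    print(h_index([100, 30, 6, 5, 4, 3, 2, 1]))  # 50 extra cites on the top paper: still 4
```

A single cheap citation on a marginal paper moves the index, while 50 extra citations to an already-famous paper do nothing, which is why citations bought in low-tier outlets can be enough to game it.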

Well, when Spectrum dropped Corncob TV because of the controversial show “Coffin Flop”, I, like you, was pretty upset.

But it looks like the theory of the second best is alive and well, because HBO has ordered a new show from Tim Robinson and Zach Kanin, the brilliant team from Netflix’s I Think You Should Leave. Take that, Spectrum! I’m looking forward to more Tim Robinson and Zach Kanin in my life, as I need to know what happens to all those naked dead bodies falling out of crappy wood at funerals. I tend to agree you don’t need the families’ permission because, like large language models, they ain’t got no souls!

Speaking of Corncob TV, $100 million deals for podcasts are not just for Joe Rogan. Someone call Kyle Krestchman, head of economics at Spotify, and see if we can’t get Coffin Flop on there. At $100 million, that’s a steal for such a cutting-edge, ahead-of-its-time show!

The new Tim Robinson movie with Paul Rudd is also supposed to be “one of the funniest comedies of the year”, but you had me at “Tim Robinson”.

Here’s an article about Mark Zuckerberg being on a podcast.

My reservation wage for voluntarily watching this new Hulu show about Mormon wives who are social media influencers, and the fake “scandal” on TikTok when several of them chose to share that they were also swingers, is maybe about $20,000. I would need to be paid at least that to watch this garbage. I guess swinging and then openly sharing it is one way to monetize being TikTok famous. I started the trailer and got ten seconds in before going back to my television’s home page.

Here’s a partial genealogy of Orley Ashenfelter on a website that the Industrial Relations Section put together for its 100th birthday. It’s partial because it’s user submitted, but it’s a good start.

This is Water and Fatigued Siblings of Howling Huskies

It may be time for you (and me) to relisten to David Foster Wallace’s “This is Water” commencement speech at Kenyon College from 2005. It’s one of my favorite speeches of my lifetime.

Do you ever wake up and, in your best Jerry Seinfeld voice, say “What’s the deal with conformal inference?!” Well, Matheus Facure has it for you. His book on causal inference, Causal Inference for the Brave and True, which that article links to, is great.

I have gotten hooked on videos of howling huskies lately. Here’s a hilarious one of a Golden Retriever in the back seat of the family car when his two siblings, one on each side, begin howling. He looks so stressed out and so exhausted by their behavior. Been there.

Here’s a funny video. A guy came in for a job interview, and they got confused and thought he was there to talk about how the music industry had shifted to downloads in response to Apple’s presence in the music market. He is, to say the least, a little surprised that his job interview turned out like this.

Less Positive Developments in Artificial Intelligence

Now I’ll pivot a little more back to AI. I’m seeing more and more grants for projects that can credibly upskill workers with AI. Here’s one. I have another one somewhere, but I can’t seem to find it.

A new study says that students are not learning from AI. This is the first time in my career, though, that I’m committed to figuring out how to design classes pedagogically so that they do. I think this is not an exogenous finding about AI but rather an endogenous one: just because it’s true 18 months after its release doesn’t mean it has to be that way. But if we let the Invisible Hand handle it for us, I bet it will stay that way. It’ll take some pedagogical skill, experimentation and careful study to figure out how to leverage it. I’ve been bringing it into my history of thought lectures to help explain hard parts of the sections we are reading, and I’m not sure it’s working, so I plan to stop for now.

Taylor Swift always seems to find herself on the cutting edge, though probably not always in a way she had been looking forward to. This article discusses how she has become a prominent voice in the backlash against AI, particularly regarding deepfakes and digital impersonation. Celebrities, especially women like Swift, are increasingly vulnerable to AI-generated images and videos used in scams, hoaxes, and abusive content. Swift’s response to a recent AI-generated false endorsement demonstrates the growing tension around AI’s potential misuse and the struggle of individuals to protect their likeness and identity in the digital age. I’ll have to cover this in the spring class on AI and the Market that I’m developing at Baylor.

The Financial Times has an article that somehow isn’t behind a paywall. It’s about how AI-driven companies like Nvidia and Microsoft have surged in value while the broader tech sector remains weak. Many tech companies, especially those not focused on AI, are still struggling to recover from the post-pandemic downturn, and the AI rally is kind of masking that broader weakness. Investors are noticing and are cautious, worried that enthusiasm for AI could be hiding a cyclical downturn in traditional tech areas such as software and IT consulting. Despite some signs of stabilization, the sector is still grappling with slow growth, overexpansion, and economic uncertainty. Here’s a WSJ article, unfortunately behind a paywall, on AI spending in charts. I love charts. I wish Corncob TV had picked up my pioneering show about charts, in which people fall out of coffins while a speaker gives a talk using only charts. But we already know that the mainstream media isn’t ready for pioneering shows like ours.

The New Republic has an article about Ray Kurzweil’s prediction of the Singularity, when human and artificial intelligence will merge by 2045, enabling exponential advances in AI and technology. But it’s also about his career and the influence he’s had on AI development, which is the part I enjoyed.

This Nicolas Cage horror movie is getting good reviews, but alas, it’s on a streaming channel even more obscure than Corncob TV, so I doubt I’ll be seeing it any time soon. Sometimes late-stage capitalism, with its monopolistic competition among ten billion streaming apps, is not that much fun.

I am not one of those people who think AI hype is a real thing, but if there is AI hype anywhere, I think it’s “Apple Intelligence”. But it’s here, and it will be rolling out slowly.

And that’s it! Got to now get other kinds of work done. Wish me luck!
