Strawberry Use Cases #1: Chain of Thought in Back-of-the-Envelope Math

The value of Strawberry showing its work

In this post, I want to share a use case for the new large language model released last week by OpenAI, codenamed “Strawberry,” or more technically, o1-preview. The model builds on a method devised in 2022 called “Chain of Thought,” which I’ll describe below. As I understand it, it is a technique that helps an AI language model work through complex reasoning tasks.

The purpose of this Substack is to highlight how this new reasoning capability can be applied to real research problems. To make that concrete, I want to share a use case that I think people will immediately recognize: the “back of the envelope calculation” so often found at the end of applied papers. It’s a narrow application, but it’s also a general task we do whenever we communicate the policy relevance of our applied work. So I thought that using Strawberry for a back of the envelope calculation might turn the light bulb on for people who assumed o1-preview was nothing more than a different kind of ChatGPT, which I don’t think is the right way to look at it.

You see, ChatGPT-4o is excellent at conversational speech and generating answers when asked, but it doesn’t “show its work”, so to speak. That’s where Strawberry is different. Strawberry also generates an answer, but what makes it special is it shows its work. Rather than jumping straight to the final answer, Strawberry takes you through its own endogenous reasoning process, displaying for you the logic it chose, often making even the assumptions it used for those steps clearer, before it then moves into its own calculations. Its output is a clear description of the steps it used to arrive at an answer, and I think that is far more useful at helping researchers try to work through a problem than the answer itself. This makes it distinctively helpful for research, where understanding how you arrive at an answer can be just as important as the answer itself.

For researchers, the ability to see the reasoning—to watch a model break down a complex problem into smaller, digestible parts—can change how we approach certain tasks. It’s not just about automating output; it’s about offering transparency and a chance to engage critically with the process. That’s what this post is about: how Chain of Thought reasoning in Strawberry enables a more rigorous, insightful approach to back-of-the-envelope calculations, and why that makes it a powerful tool for research workflows.

Chain of Thought

When do you actually need a “reasoning task” done? And what is Strawberry doing exactly when it “reasons”? First, get it out of your mind up front that whatever it is doing is like what you or I are doing when we “reason”. It’s not reasoning so much as it’s following a “chain of thought” or CoT, which is actually a technical term. And that is probably the phrase you want to learn to use more so than the verb “reasoning”; otherwise you’re going to quickly find yourself in a circular philosophical debate about the nature of consciousness. Which, don’t get me wrong, I love that conversation as much as the next guy, but not when I’m trying to talk about something else.

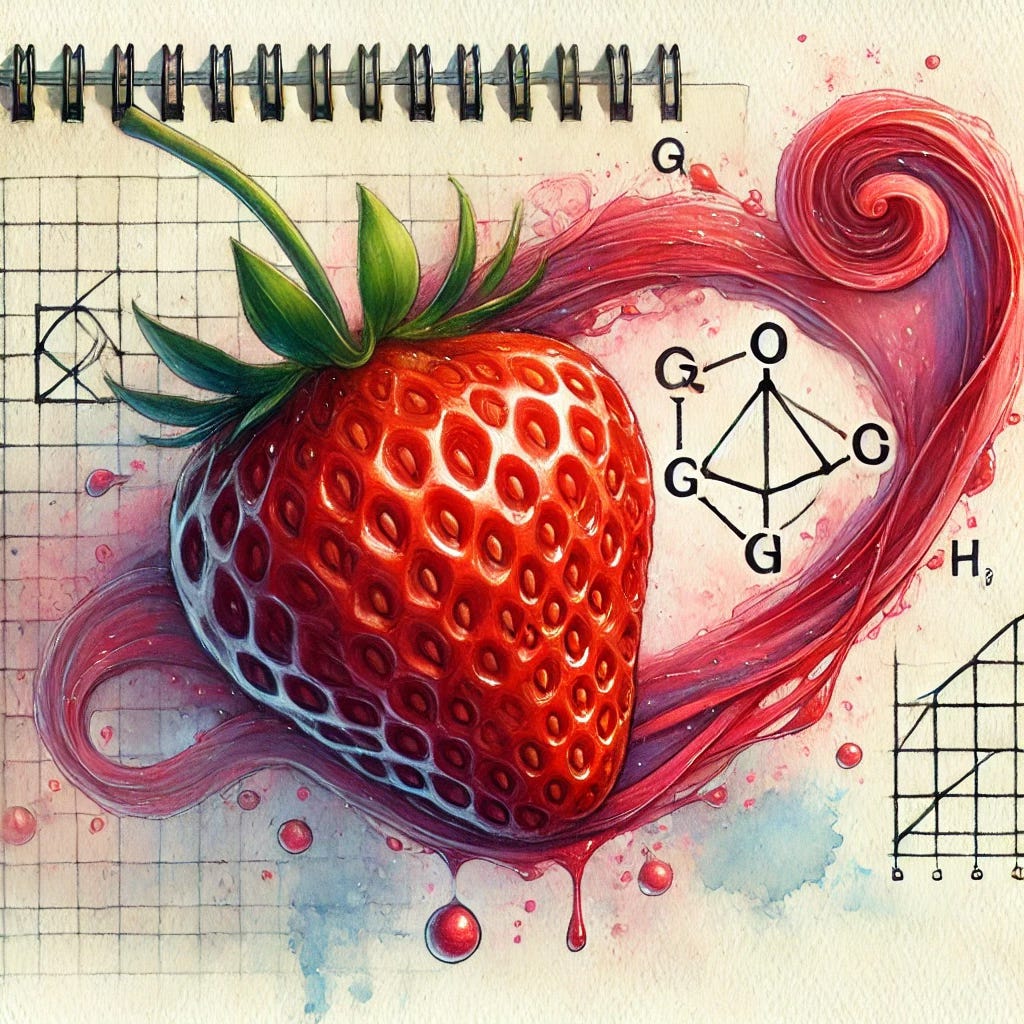

So a little background about this chain of thought thing. The concept of chain of thought in large language models is a fairly new development in AI modeling. It was introduced in the paper “Chain-of-Thought Prompting Elicits Reasoning in Large Language Models” by Jason Wei and coauthors. It was first uploaded to arXiv in January 2022, so it is on the one hand new, but at the pace at which these models are being trained and commercialized, also ancient history. It has already been cited about 7,800 times since it appeared a mere 2 1/2 years ago. This year alone, it has been cited almost 4,900 times as of September 16th. Interestingly, the lead author, Jason Wei, is now at OpenAI.

So CoT is a reasoning technique developed by Wei, et al. (2022) where the LLM (e.g., Strawberry) solves a problem and lays out the reasoning it used to arrive at that solution. It uses a step-by-step process instead of the GPT-4o approach of going directly to an answer. And if all you hear is that difference (Strawberry produces its reasons and its answer, but ChatGPT produces only its answer), then that may be a sufficiently useful simplification to distinguish between the two. You don’t always need the chain of thought when you want to have a conversation with a chatbot about something. But you might. And it’s on you (and me) to now try to better understand what precisely it is that you need and whether ChatGPT-4o or Strawberry is better suited for it. But if you need the reasoning, and not merely an answer, then Strawberry is what you want, as it breaks the task down into a series of coherent intermediate steps that follow one after the other.

Use Case of CoT in Interpreting Causal Estimates

Willingness to Pay To Take Off Masks in a Mask Mandate from Choice Experiment

Last night, I saw a new paper on the NBER website that used a choice experiment to estimate the costs of mask mandates during COVID. The paper is titled “Mask Mandate Costs” and is coauthored by Patrick Carlin, Shyam Raman, Kosali I. Simon, Ryan Sullivan & Coady Wing. Now note, I have not read this paper at all, so the purpose of what I’m going to do is to show what I was able to do having only read the abstract and used Strawberry. In other words, the good, the bad and the ugly. But here goes.

In this paper, they asked people a series of questions designed to elicit their willingness to pay (WTP) to more or less “take off their mask” during a mask mandate. They express it a little more awkwardly than that: they present their estimates as WTP for exemption from a mask mandate. But that basically means “how much would I be willing to pay to remove my mask during a mandate?” They find this:

"The average person was willing to pay $525, and a small segment of the population (0.9%) stated they were willing to pay over $5,000 to be exempted from the mandate. Younger respondents stated higher willingness to pay to avoid the mandate than older respondents. Combining our results with standard measures of the value of a statistical life, we estimate that a 3 month masking order was perceived as cost effective through willingness-to-pay questions only if at least 13,333 lives were saved by the policy."

So that’s their number, the price, so to speak, that they’re going to hang their hat on. They conclude that the WTP for the average person to take off a mask during the pandemic is $525, and that in the farthest part of the tail it is over $5,000. In words, it’s as though the average person during the pandemic goes to Walmart and says “And I’d like to now take off the mask” and the cashier says “Sure, that’ll be $525” and the average person says “Whew. That’s exactly the amount of money I have left in my checking account. I’ll take it.” And then they “buy” it. They are willing to pay up to $525 to remove their mask during the pandemic, but not a penny more than that, because if they paid $525.01 but only valued it at $525, they’d be incurring a loss, and rational people are not thought to do that.

That’s the logic and the interpretation, is my point. It’s not relevant if you think it’s ridiculous; get a ticket if you want to get in line for the group that wants to make fun of economists and their approaches to real world problems. But this is a commonly used choice experiment designed to elicit preferences and convert them into dollar amounts that can be used for reasoning about whether costly policies are worth pursuing, to estimate the damages associated with various things or the implicit value of amenities.

But that’s not my point. My point is what they do last. They write, “at least 13,333 lives would have to be saved by the policy” in order for mask mandates to pass a cost benefit threshold. This is called the “back of the envelope” calculation found in many applied studies. It’s usually the very last paragraph before the Conclusion section where someone takes their estimates, applies them to some out of sample data they collected, and then tries to give an alternative interpretation that is hopefully a bit easier to digest than an estimated causal effect itself.
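To make the structure of a threshold like this concrete, here is a minimal sketch of the usual break-even logic: aggregate stated cost (mean WTP times the number of people covered) divided by the value of a statistical life (VSL). Only the $525 comes from the abstract. The population and VSL values below are round-number placeholders I picked for illustration, not the paper's inputs, so the result will not reproduce 13,333 exactly.

```python
def break_even_lives(mean_wtp: float, population: float, vsl: float) -> float:
    """Lives a policy must save for its benefits to cover the aggregate stated cost."""
    aggregate_cost = mean_wtp * population  # total willingness to pay for exemption
    return aggregate_cost / vsl


MEAN_WTP = 525            # dollars per person, from the abstract
POPULATION = 250_000_000  # placeholder, NOT from the paper
VSL = 10_000_000          # dollars per statistical life, placeholder, NOT from the paper

lives = break_even_lives(MEAN_WTP, POPULATION, VSL)
print(f"Break-even lives saved: {lives:,.0f}")
```

The point of writing it as a function is that the threshold moves one-for-one with whatever population and VSL you plug in, which is exactly the kind of assumption worth seeing spelled out.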

Strawberry Uses CoT To Estimate Net Benefits of Mask Mandates

So, I decided that I wanted to know whether estimates of the effect of mask mandates on mortality pass the cost-benefit threshold implied by this 13,333 number in the authors’ paper. But to figure this out, I needed to try and give the instructions to Strawberry, and I did it in two steps. The first was to request treatment effect estimates from published papers. The second was to use that and the 13,333 number from the choice experiment to guesstimate whether the mandates were cost effective. So let me share that now.

Task 1: Find 5 Papers Using Quasi-Experimental Methods That Estimated Mask Mandate’s Effect on Mortality

Remember, you have to select o1-preview from the model pull-down menu within your ChatGPT app or browser. In other words, you aren’t going to use the ChatGPT-4o model (which is the default). You’re going to use o1-preview, which is just below it. Here then was my prompt. Note I did this because I read early on when o1-preview came out that it was incredibly good at finding references, even better than perplexity.ai. So I started out there.

What is the best five studies using quasi experimental methods that looked at effect of masking mandates on mortality rates? And please describe each one briefly, the date and the method and the finding.

You can find in there the five papers it found. I didn’t request links, but feel free to google them. The one paper I immediately remembered, though, was Victor Chernozhukov and coauthors’ paper published in the Journal of Econometrics. A couple of these studies find reductions in cases but not mortality, but Victor finds a 2pp reduction in weekly death growth rates. The paper by Guy, et al. (2021) found a 1.9pp decrease in daily death growth rates 81-100 days after implementation. Others are on cases but not deaths, and I made a mental note of that.

Task 2: Determine if mask mandate legislation was cost-effective using estimated ATT of -2pp and 13,333 lives saved using choice experiment

Now notice the phrases “weekly death growth rates” and “daily death growth rates” above, which cover different time horizons. In order for the mask mandate to be cost-effective using the NBER working paper threshold, it has to have saved 13,333 lives within 3 months of implementation, so I had to figure out what I would do next. So here’s what I did.
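To see why the time horizon matters, here is a small sketch that compounds a daily growth rate into its weekly equivalent. The 1.9pp daily effect is the Guy, et al. number quoted above. The 3% baseline daily growth rate is purely hypothetical, chosen only so there is something to compound.

```python
def weekly_equivalent(daily_growth: float) -> float:
    """Compound a constant daily growth rate over 7 days into a weekly growth rate."""
    return (1 + daily_growth) ** 7 - 1


BASELINE_DAILY = 0.03    # hypothetical baseline daily death growth, not from any study
EFFECT_DAILY_PP = 0.019  # 1.9pp reduction in daily growth, as quoted above

without_mandate = weekly_equivalent(BASELINE_DAILY)
with_mandate = weekly_equivalent(BASELINE_DAILY - EFFECT_DAILY_PP)

print(f"Weekly growth without mandate: {without_mandate:.1%}")
print(f"Weekly growth with mandate:    {with_mandate:.1%}")
print(f"Implied weekly effect:         {without_mandate - with_mandate:.1%} points")
```

Under that made-up baseline, a 1.9pp daily effect works out to roughly 15 percentage points per week, which is far larger than a 2pp weekly effect. So the two estimates should not be treated as interchangeable without converting them to a common horizon first.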

Please use these studies to answer this question: this current study evaluated the cost of masking by using some kind of choice experiment. They give a threshold for whether the mask mandates were cost effective and say they had to save 13,333 lives. Read this abstract then using the aforementioned papers you listed findings which mostly express percentage point declines (2pp seems common finding) determine if the legislation was indeed cost effective.

Abstract: This paper presents the results from a hypothetical set of questions related to mask-wearing behavior and opinions that were asked of a nationally representative sample of over 4,000 participants in early 2022. Mask mandates were hotly debated in public discourse, and though much research exists on benefits of masks, there has been no research thus far on the distribution of perceived costs of compliance. As is common in economic research that aims to assess the value to society of non-market activities, we use survey valuation methods and ask how much participants would be willing to pay to be exempted from rules of mandatory community masking. The survey asks specifically about a 3 month exemption. We find that the majority of respondents (56%) are not willing to pay to be exempted from mandatory masking. However, the average person was willing to pay $525, and a small segment of the population (0.9%) stated they were willing to pay over $5,000 to be exempted from the mandate. Younger respondents stated higher willingness to pay to avoid the mandate than older respondents. Combining our results with standard measures of the value of a statistical life, we estimate that a 3 month masking order was perceived as cost effective through willingness-to-pay questions only if at least 13,333 lives were saved by the policy.

Now let me show you the response. Note, there are two things in this response. One, Strawberry will give an answer, but that’s not the main thing I want you to take note of. Two, it’s going to show the chain of steps used to arrive at that answer. Or to put it a different way, Strawberry is going to “show its work”, as we might say as educators. And that’s the thing I want you to see more than anything.

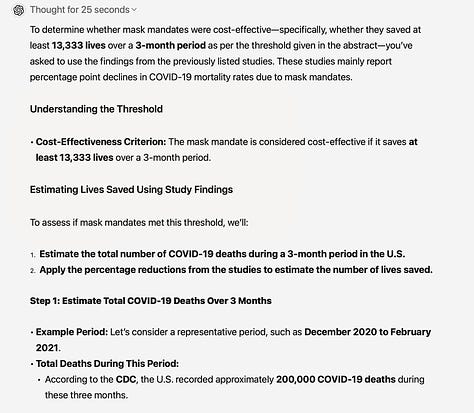

First, it restates the threshold which is that the mask mandate is considered cost effective if it saved 13,333 lives over a 3-month period.

But then in step two, it picks a 3-month period for its back of the envelope calculation. It chooses December 2020 to February 2021 in which there were 200,000 deaths from COVID-19 according to the CDC. Note, the point is not whether it is the right space of time — you would actually want to use the corresponding ATT estimates from those studies, which would mean using the post-treatment period and the treatment states and somehow back out the counterfactual mean level of COVID deaths for the 3-months after it was implemented. But see that is actually my point — it’s the explicit choice that is so helpful here because it allows you the ability to trace down the logic that this LLM used. And if you disagree, then you can go back and insert your own correction and ask it to repeat the analysis. But anyway, it used -2pp. But ideally you’d use a more complete meta-analysis of selected ATT estimates for this. I just wanted to see how far I could get using only my phone.

Third, it begins to do the calculation and the first thing it makes a note of is that the ATT estimates reported by Victor et al and Guy et al were expressed as decreased growth rates. One in days, the other in weeks. Strawberry notes, “Even small percentage point reductions can lead to substantial decreases in cumulative deaths due to the exponential nature of virus spread.” So, this step appears to be key to why it then chooses the fourth step.

In the fourth step, it lays out its assumptions and outlines the method it will use to answer the question.

Assume a baseline death growth rate (it chooses a 10% weekly mortality growth rate without masks).

Apply the reduction from mask mandates. This is where the -2pp is going to come in. It’ll be an 8% weekly mortality growth rate with masks, and a 10% weekly mortality growth rate without them.

Estimate lives saved over 12 weeks (3 months).
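The three steps above can be sketched in a few lines. This is my own plain reading of them, not a reproduction of what Strawberry computed: it assumes constant weekly growth, counts weeks from 0 to 11, and starts from a hypothetical 1,000 deaths in week 0 (a number I made up so the loop has a level to start from).

```python
def weekly_deaths(initial: float, growth: float, weeks: int) -> list[float]:
    """Weekly death counts when deaths grow at a constant weekly rate."""
    return [initial * (1 + growth) ** t for t in range(weeks)]


INITIAL_WEEKLY_DEATHS = 1_000  # hypothetical starting level, not from any study
BASELINE_GROWTH = 0.10         # step 1: 10% weekly growth without masks
EFFECT_PP = 0.02               # step 2: mandates lower growth by 2pp
WEEKS = 12                     # step 3: 12 weeks, about 3 months

no_mandate = weekly_deaths(INITIAL_WEEKLY_DEATHS, BASELINE_GROWTH, WEEKS)
mandate = weekly_deaths(INITIAL_WEEKLY_DEATHS, BASELINE_GROWTH - EFFECT_PP, WEEKS)

lives_saved = sum(no_mandate) - sum(mandate)
print(f"Cumulative deaths without mandate: {sum(no_mandate):,.0f}")
print(f"Cumulative deaths with mandate:    {sum(mandate):,.0f}")
print(f"Lives saved over {WEEKS} weeks:        {lives_saved:,.0f}")
```

Notice that the answer scales directly with the starting level, so the choice of that level is doing a lot of work in any calculation of this kind.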

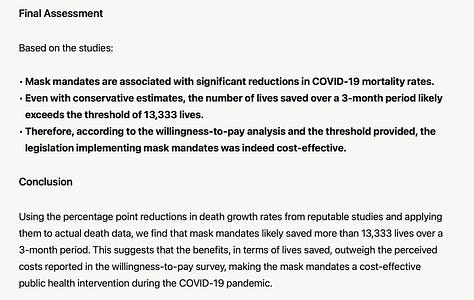

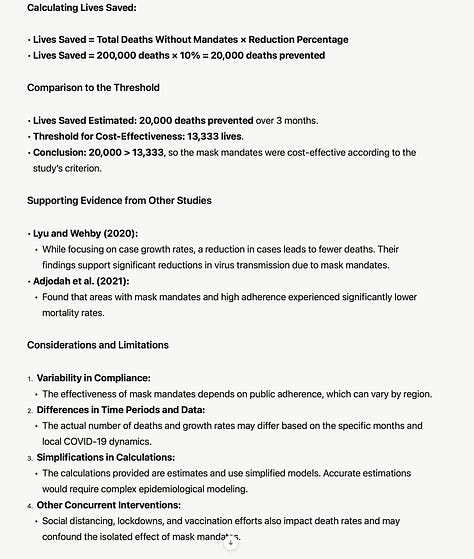

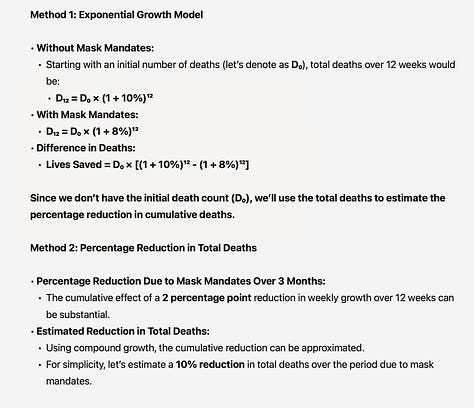

And then in the last step, after all that logical setup has been laid out, it proceeds. It chooses two separate methods for using that -2pp to calculate averted deaths in a 3-month period. Method 1 it calls “Exponential Growth Model”. You can see it above. The second method it calls “Percentage Reduction in Total Deaths”. I’ll focus on that one. Here’s its reasoning from that point:

Method 2: Percentage Reduction in Total Deaths

Percentage Reduction Due to Mask Mandates Over 3 Months:

• The cumulative effect of a 2 percentage point reduction in weekly growth over 12 weeks can be substantial.

Estimated Reduction in Total Deaths:

• Using compound growth, the cumulative reduction can be approximated.

• For simplicity, let’s estimate a 10% reduction in total deaths over the period due to mask mandates.

Calculating Lives Saved:

• Lives Saved = Total Deaths Without Mandates × Reduction Percentage

• Lives Saved = 200,000 deaths × 10% = 20,000 deaths prevented
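Method 2 is simple enough to check directly. The sketch below reproduces the arithmetic using only the numbers in the response (200,000 deaths, a 10% reduction) and the paper's 13,333 threshold, and then turns the question around to ask how small the reduction could be and still clear the threshold. The function names are mine.

```python
TOTAL_DEATHS_WITHOUT_MANDATES = 200_000  # Strawberry's chosen 3-month baseline
ASSUMED_REDUCTION = 0.10                 # Strawberry's "for simplicity" 10% reduction
THRESHOLD = 13_333                       # break-even lives from the abstract


def lives_saved(total_deaths: float, reduction: float) -> float:
    """Deaths prevented if total deaths fall by the given fraction."""
    return total_deaths * reduction


def break_even_reduction(threshold: float, total_deaths: float) -> float:
    """Smallest fractional reduction in deaths that still meets the threshold."""
    return threshold / total_deaths


saved = lives_saved(TOTAL_DEATHS_WITHOUT_MANDATES, ASSUMED_REDUCTION)
needed = break_even_reduction(THRESHOLD, TOTAL_DEATHS_WITHOUT_MANDATES)

print(f"Lives saved: {saved:,.0f}")
print(f"Cost effective: {saved >= THRESHOLD}")
print(f"Reduction needed to break even: {needed:.2%}")
```

Given a 200,000 baseline, any reduction above roughly 6.7% clears the bar, so the conclusion depends on the assumed 10% and on the baseline far more than on the final multiplication.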

I don’t fully follow this to be honest. It’s not clear how it gets from 2pp to 10% reduction in total deaths, but that’s not really the point. The point is that I can see now that when at the end it estimates 20,000 deaths prevented off of Victor, et al and Guy, et al’s 2pp reduction in growth rates, I can see that it got there because of this move between 2pp and 10% multiplied off a counterfactual mean of 200,000 deaths.
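One way to probe that jump is to ask what fractional reduction in cumulative deaths a 2pp lower weekly growth rate implies under simple compounding. The sketch below does that for a few baseline growth rates. To be clear, this is my own guess at a possible link, and the response does not say this is how it got to 10%. It assumes constant weekly growth over 12 weeks with both paths starting from the same level, and the 5% and 20% baselines are arbitrary comparison points.

```python
def implied_reduction(baseline_growth: float, effect_pp: float, weeks: int) -> float:
    """Fractional drop in cumulative deaths when weekly growth falls by effect_pp."""
    baseline = sum((1 + baseline_growth) ** t for t in range(weeks))
    treated = sum((1 + baseline_growth - effect_pp) ** t for t in range(weeks))
    return 1 - treated / baseline


EFFECT_PP = 0.02  # the 2pp reduction in weekly death growth
WEEKS = 12        # about 3 months

for growth in (0.05, 0.10, 0.20):  # 10% is Strawberry's baseline; the others are arbitrary
    reduction = implied_reduction(growth, EFFECT_PP, WEEKS)
    print(f"baseline growth {growth:.0%}: cumulative deaths fall by {reduction:.1%}")
```

With the 10% baseline this gives a reduction of about 11%, which is in the neighborhood of the 10% it used. Whether that is a coincidence I cannot tell from the output, but it at least shows the 10% is not wildly out of line with its own Method 1 assumptions.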

And so then you see it concludes. If the threshold for cost-effectiveness is 13,333 lives saved and Victor, et al. and Guy, et al provide point estimates that are approximately equal to 20,000 lives saved given all those explicit assumptions and deductions, then mask mandates were cost-effective even acknowledging that the mean person’s WTP to take their mask off was $525.

Comments and Thoughts

The main value I see so far of o1-preview (Strawberry) is the explicit step-by-step reasoning (“Chain of Thought”) that it provides. That and it’s a great search engine for more relevant citations. ChatGPT-4o does not do this. It jumps directly to an answer and you really don’t know how that answer was arrived at except that inside it is a massive neural net trained on all the data in the world and it’s doing some kind of unknown word association. Well, Strawberry is also doing something like that. But the difference is that by giving me a list of steps, I at minimum can evaluate the logic, and not just the answer, and decide whether I agree or not.

The exercise elucidated little details for me. For instance, the 200,000 number. Note that if we are using causal estimates, we cannot use just any number as our baseline. You cannot, for instance, use national deaths for December 2020 to February 2021 if the estimates came from diff-in-diff (which Guy et al did, and I think probably Victor’s targeting the ATT too but I need to read that more closely). If you’re using causal estimates from an ATT estimate, then it’s literally going to be a summing of treatment effects for treatment states from t=0 to t=12 in weeks. You actually do not know from observation the number to use in that calculation, but it isn’t 200,000, and that is because the ATT is a reduction from some counterfactual value. So you’re going to need to figure that out in an intermediate step, probably not going the route that Strawberry went. You might go in reverse, starting with the post-treatment observed mortality, and then back out using that 2pp what it would’ve been had the law not passed, and turn that into a number of deaths.
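Here is a rough sketch of that reverse route, under strong simplifying assumptions: the ATT is treated as a constant 2pp reduction in the weekly growth rate, growth is measured as a simple week-over-week percentage change, and both paths start from the same week-0 level. The observed series is invented for illustration. In practice it would be weekly deaths in the treated states after the mandate.

```python
def counterfactual_path(observed: list[float], effect_pp: float) -> list[float]:
    """Rebuild the no-mandate path by adding the effect back to each observed growth rate."""
    path = [observed[0]]  # both paths share the week-0 level
    for prev, curr in zip(observed, observed[1:]):
        observed_growth = curr / prev - 1
        # ATT = observed growth - counterfactual growth = -effect_pp
        path.append(path[-1] * (1 + observed_growth + effect_pp))
    return path


# Hypothetical observed weekly deaths in treated states, weeks 0 through 12
observed = [100 * 1.08 ** t for t in range(13)]

counterfactual = counterfactual_path(observed, effect_pp=0.02)
averted = sum(counterfactual) - sum(observed)

print(f"Observed deaths (with mandate):  {sum(observed):,.0f}")
print(f"Counterfactual deaths (without): {sum(counterfactual):,.0f}")
print(f"Deaths averted:                  {averted:,.0f}")
```

The design choice here is that nothing is assumed about the baseline growth rate. It is read off the observed data, and only the 2pp comes from the studies, which is closer to what an ATT actually licenses.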

But see that’s my exact point. My exact point is that the CoT reasoning provided allows you to get a flowchart immediately of the assumptions and path taken. It’s a map of the logic used by Strawberry to arrive at 20,000 lives saved. The value of Strawberry is not so much the answer as the chain of logic used to get at that answer, and if you disagree with its logic, it’s almost certainly helpful to have it so that you can use that logic to find the things you disagree with and then insert your own alternative set of assumptions and set of paths.

And if you did do that, you know what you could do? Just tell Strawberry that you aren’t so sure about step 3. Tell it that Victor, et al. and Guy et al seem to be using ATT and if so, then the correct “baseline” wouldn’t be the national average. A better one would be the baseline deaths in the treatment states for a month prior to treatment, but even that is not exactly what is implied by the ATT estimate. You could just lay out to it precisely what you’re thinking and tell it this:

“do it again, but here’s my reasoning about why I don’t like your approach, respectfully. I think that it is not the correct application of the ATT since the ATT is measuring a change from a counterfactual baseline, and that counterfactual baseline is technically something we could calculate. But if we did, then probably we would be getting directly at the number we need. So do me a favor: use the states that passed masking mandates, and do it two ways. Use the baseline (pre treatment) mortality as the number to multiply by 10% or whatever. And then in a second method, more directly convert -2pp into an estimated number of lives. Just be sure to provide for me the steps and the assumptions you used as that was very helpful.”

Anyway, here’s my prediction. When Strawberry came out, I was trying to get it to set up consumer theory problems: an objective function expressed with utility functions, resource constraints, first order conditions, comparative statics. I was curious if it could do that. No luck so far with what I was trying. But then I saw this paper and tried what I tried, and that’s when it dawned on me: these back of the envelope calculations, as they’re called in applied work, are the “last paragraph before the conclusion” of every applied micro paper that ever bothers to do one. Well, I expect that we will probably get more back of the envelope calculations, and likely better ones, that use CoT to get there. That’s got to be the lowest hanging fruit in economic research where this would be helpful. So often we just have our coauthors to do this, and it would be nice to have another set of eyes and ears to think it through. And this is also the sort of thing you can imagine giving an RA to do: double checking the calculations multiple ways, using the CoT to help the RA better understand this critical thinking series of steps.

But despite my optimism, I still worry about the effect this will have on learning. All of this is reducing the time inputs needed to arrive at an answer. But what if time inputs are needed to arrive at knowledge? What if the way humans learn is by using time intensively, through repeated failures, and that only through repeatedly failing with intensive time use does a person experience discrete jumps in insight and understanding? Then it does make me wonder what happens now that we have technology that accomplishes the task with less time and less costly cognition. The question is which model is correct for thinking about learning.
