Learning Continuous Diff-in-Diff with Claude Code: Deriving the TWFE Weights (Part 1)

Claude Code 40

In this series we are going to go slow. The purpose is twofold. One, learn the continuous diff-in-diff paper by Callaway, Goodman-Bacon and Sant’Anna (CBS), conditionally accepted at AER. Two, I am going to take a stab at building something that I can use again: a package. Brantly Callaway already has an R package, so we will see what we can pull off, but my sense is that if we can make the package do some things that are not already in that package (like calculate the TWFE weights, include covariates, or who knows what else), then it’ll help me, because I think I need to personally make something if I am going to master this paper. I’m also looking for exercises that will deepen my skills with Claude Code, and trying to sketch out a package seems like a good one. As this package is for myself, it’s beta only. My goal is first and foremost for us to learn the paper, and then to see if we can use Claude Code to help me achieve that goal.

But today is going to be basic. Our only goal is to create the architecture for our local project directory using a variety of new /skills. Specifically, we will be using the /beautiful_deck skill, the /split-pdf skill, the /tikz skill and the /referee2 skill. Not all of these skills have been discussed on here before (though I use them a lot), so I explain what each does and provide the prompts as well. We will be creating a beautiful deck of the first step involved in the CBS decomposition of the TWFE coefficient in instances with continuous doses.

Thanks again for supporting this substack, as well as my book, Causal Inference: The Mixtape, my workshops at Mixtape Sessions with Kyle Butts and others, and my podcast. Helping people gain skills and access to applied econometric tools through a variety of creative efforts is sort of my passion. It’s a labor of love. If you aren’t a paying subscriber, consider becoming one today! I keep the price as low as Substack lets me at $5/month and $50 for an annual subscription (and $250 for founding members). The Claude Code stuff will for a while continue to be free at its launch, though that may change in the future since after 6 months, there are so many resources. Mine will continue to focus on practical research purposes or what I call “AI Agents for Research Workers”. Thank you!

My Bacon Decomposition Conjecture

I have a theory. My theory is that no one actually wanted to learn the new diff-in-diff estimators (under differential timing) until Andrew Goodman-Bacon’s paper, ultimately published in the 2021 Journal of Econometrics, showed in a very clear way that the vanilla TWFE specification was biased. It was biased even with parallel trends.

My theory is that writ large, most applied people don’t care about new estimators until they can be convincingly shown that there is something wrong with the estimator that they already use, and if they can also be shown that it is problematic even with the assumptions they thought were sufficient. And so when Bacon’s paper came out showing that TWFE (thought then to actually be a synonym for difference-in-differences, not an estimator) was biased, it really shook people.

Now you can disagree with my theory, but that’s my working hypothesis, and I’m using it to motivate this series. And here is my conjecture. I don’t think people really, deep down, want to learn this new continuous diff-in-diff paper. I think many people are on the other side of the diff-in-diff Laffer curve. They want to see less diff-in-diff stuff, not more. And the only way a person will voluntarily choose to learn another diff-in-diff estimator is if you can help them understand that their estimator of choice, TWFE, is biased. Otherwise, we have bills to pay, mouths to feed, miles to run, and classes to teach.

Backwards engineering and the TWFE coefficient

So, the plan then to help do that is to study the TWFE decomposition that Callaway, Goodman-Bacon and Sant’Anna derive. I asked Bacon by text the other day could I just call this paper CBS because CGBS does not roll off the tongue. He said I could, because everyone calls him Bacon anyway, so I’m calling it CBS. Let’s get started then.

So Pedro Sant’Anna presented yesterday at Harvard and he noted that there are two ways that econometricians have approached causal inference. The first is what he calls “backwards engineering”. Backwards engineering is where you more or less run a regression and, using some tool like Frisch-Waugh-Lovell, crack open the regression coefficient and figure out what causal estimand, if any, you just calculated. Sometimes the weights are so weird and poorly behaved that you didn’t calculate one at all. And that’s backwards engineering.

Pedro prefers the second approach, which he calls “forwards engineering”. Forwards engineering is where you state the causal estimand you are interested in, you note the assumptions that you think are realistic in your data, and you do a particular calculation that, once you invoke those assumptions, equals that population estimand (or rather, the mean of its sampling distribution does).

Well, we are going to do both of these, but today we are going to do backwards engineering. And we’re doing it first because of what I said above: I think people need to be taught what the coefficient they love means first, and if they don’t like hearing what it is, then they may actually be willing to sacrifice their time to listen to a new one.
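To make the Frisch-Waugh-Lovell idea concrete, here is a minimal sketch on a simulated panel. Everything in it (the panel dimensions, the data generating process, the variable names) is made up for illustration and has nothing to do with the CBS paper or the Lu and Yu data. It only shows that the TWFE coefficient from a regression with unit and time dummies is numerically the same number you get from regressing the outcome on the dose-by-post variable after that variable has been residualized on the fixed effects.

```python
import numpy as np

rng = np.random.default_rng(0)
n_units, n_periods = 50, 6

# Hypothetical balanced panel: one time-invariant dose per unit,
# switched on in the second half of the sample.
unit = np.repeat(np.arange(n_units), n_periods)
time = np.tile(np.arange(n_periods), n_units)
dose = rng.uniform(0, 1, n_units)[unit]
post = (time >= n_periods // 2).astype(float)
x = dose * post
y = 0.5 * unit / n_units + 0.2 * time + 1.5 * x + rng.normal(0, 1, unit.size)

# Route 1: OLS of y on x plus unit dummies and time dummies.
unit_dummies = (unit[:, None] == np.arange(n_units)).astype(float)
time_dummies = (time[:, None] == np.arange(1, n_periods)).astype(float)  # drop one period
X = np.column_stack([x, unit_dummies, time_dummies])
beta_dummies = np.linalg.lstsq(X, y, rcond=None)[0][0]

# Route 2 (FWL): residualize x on the fixed effects, then regress y on the residual.
# In a balanced panel, residualizing on two-way fixed effects is two-way demeaning.
def two_way_demean(v):
    m = v.reshape(n_units, n_periods)
    m = m - m.mean(axis=1, keepdims=True) - m.mean(axis=0, keepdims=True) + m.mean()
    return m.ravel()

x_tilde = two_way_demean(x)
beta_fwl = (x_tilde @ y) / (x_tilde @ x_tilde)

print(beta_dummies, beta_fwl)
```

The two printed numbers agree up to floating point error. The second route is the useful one for backwards engineering, because it writes the coefficient as a weighted sum of outcomes, and those weights are what you then stare at.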

But we want to use Claude Code to help us here, so let’s start with the regression first of all.

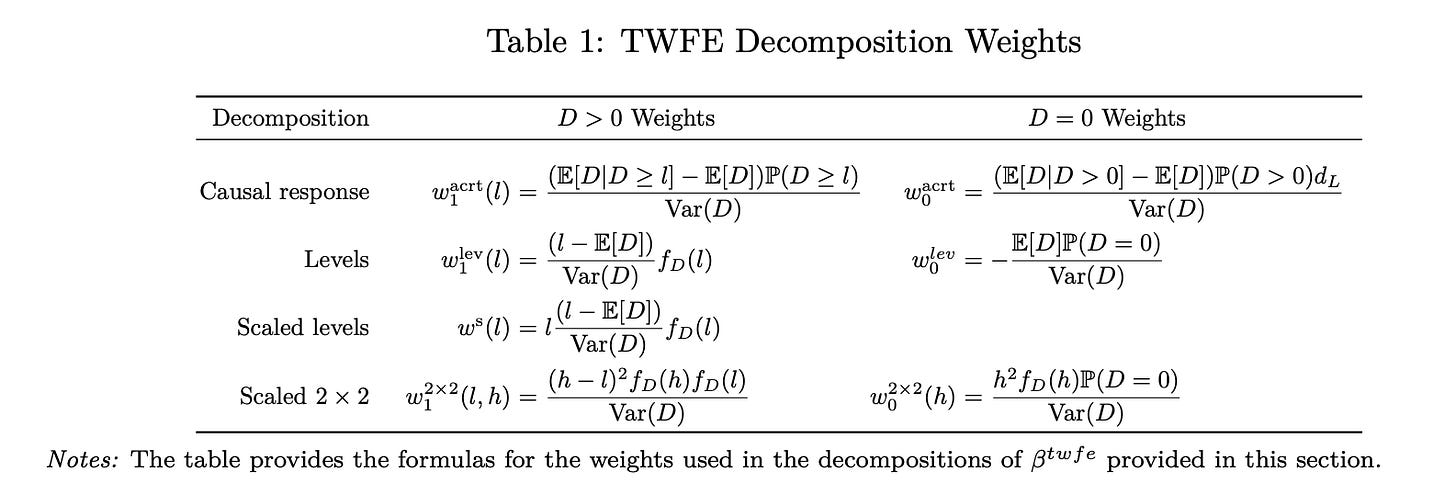
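The image is not reproduced as text here. Assuming it shows the usual two-way fixed effects specification with a continuous dose, it would read something like the following, where the notation (time effects, unit effects, a time-invariant dose interacted with a post-period indicator) is my assumption rather than a transcription of the image:

```latex
Y_{it} = \theta_t + \eta_i + \beta^{twfe} \, D_i \cdot Post_t + v_{it}
```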

And then we will note from the paper what the beta means. Here are the CBS weights.

Bleh, right? That’s gnarly looking. So this is where Claude Code is going to help us. What we are going to do is literally write a package together that when invoked will actually calculate these weights.

But to do it, we’re going to need an application. Kyle Butts developed an application for our CodeChella so I’m going to use it. It’s from “Trade Liberalization and Markup Dispersion: Evidence from China’s WTO Accession” by Yi Lu and Linhui Yu, 2015 American Economic Journal: Applied Economics. Here’s what the paper is about.

Lu and Yu’s paper is about China’s 2001 WTO accession. This forced industries with pre-WTO tariffs above 10% to cut them to the ceiling. Lu and Yu use the size of that predicted tariff cut as a continuous “dose” to estimate, via an industry-level DiD on 3-digit SIC panels from 1998–2005, whether trade liberalization reduced within-industry dispersion of firm markups (measured by the Theil index and four other dispersion statistics). And their answer was in the affirmative. Industries hit with larger mandated tariff cuts saw larger declines in markup dispersion, which they interpret as trade liberalization reducing resource misallocation. So that will be our application.

/split-pdf the papers

So the first thing that I did was read the paper a lot. That’s over several years, but I encourage you to read the paper. The paper follows the team’s philosophy of forwards engineering, but Table 1 gets into backwards engineering the TWFE coefficient like I said. While I understand why you forwards engineer, I think that when the world already uses one estimator, it’s more pedagogically useful for learning purposes to backwards engineer. So we will. But first, we will use my tool /split-pdf and this prompt:

Please use /newproject in here, move the pdf in this folder already into the readings or articles folder, then use /split-pdf on it. Write a summary of each split in markdown. And then write a summary of the whole thing. Pay careful attention to precisely how to calculate the twfe weights in table 1, also. That’s what we are going to focus on ourselves.

So, what I had done was I created a new empty folder, I put the paper in there, and then I had Claude Code split the paper into smaller pdfs, then write markdown summaries of each one, and then once it was done with that, write a markdown summary of all the markdowns. Then we will do the same for the China-WTO article. Here was my prompt again using /split-pdf.

Use /split-pdf on Lu-Yu, make summaries of each split in markdown, then create one big summary markdown of those smaller splits. Make the goal to deeply understand their TWFE estimation strategy, and a deep understanding of dose and outcomes in addition to whatever you ordinarily do.
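If you are curious what the splitting step amounts to mechanically, here is a rough sketch. This is not the /split-pdf skill itself; it is a hypothetical stand-in that assumes the third-party pypdf library is installed, and the chunk size of 4 pages and the output file naming are my own placeholders.

```python
from pathlib import Path


def chunk_ranges(n_pages, chunk_size=4):
    """Return (start, stop) page-index pairs that cover all pages in order."""
    return [(s, min(s + chunk_size, n_pages)) for s in range(0, n_pages, chunk_size)]


def split_pdf(pdf_path, out_dir, chunk_size=4):
    """Write one smaller PDF per chunk and return the paths written."""
    from pypdf import PdfReader, PdfWriter  # third-party; imported only when used

    reader = PdfReader(pdf_path)
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    written = []
    for k, (start, stop) in enumerate(chunk_ranges(len(reader.pages), chunk_size), 1):
        writer = PdfWriter()
        for i in range(start, stop):
            writer.add_page(reader.pages[i])
        target = out_dir / f"{Path(pdf_path).stem}_part{k:02d}.pdf"
        with open(target, "wb") as fh:
            writer.write(fh)
        written.append(target)
    return written


print(chunk_ranges(10, 4))
```

The point of splitting is just that each small piece can be read and summarized on its own before the summaries get rolled up into one.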

I copied all of these things now to my website so you can see them, both the manuscripts and both markdown summaries from /split-pdf. Here they are.

Callaway, Goodman-Bacon & Sant'Anna v4 — "Difference-in-Differences with a Continuous Treatment"

https://www.scunning.com/files/CGBS_v4.pdf

Lu & Yu (2015), AEJ: Applied — “Trade Liberalization and Markup Dispersion”

https://www.scunning.com/files/lu_yu_2015_markup_dispersion.pdf

CBS markdown summary from /split-pdf: https://www.scunning.com/files/cbs_continuous_did_summary.md

Lu-Yu (AEJ 2015) markdown summary from /split-pdf: https://www.scunning.com/files/overall_aej.md

Explaining the TWFE decomposition with a deck

And now I’m going to conclude. What we are now going to do is make a “beautiful deck” using my rhetoric of decks essay to guide us, have my /referee2 skill critique its overall organization, and then use a new skill I created recently, /tikz, to sweep through and fix any Tikz related compile errors. All of these can be found at my MixtapeTools repo, and you can either clone it locally if you want, or you can just have Claude Code read it on your side (or Codex, whatever). Let me briefly explain what each does.

Rhetoric of decks essay and /beautiful_deck skill

The rhetoric of decks essay is something I’ve worked on here and there. But essentially, it’s based on the premise that decks have their own rhetoric, that Claude has been trained more or less on every deck ever created (as well as every other piece of writing), and that the tacit knowledge involved in making good, bad, mediocre and great decks can be and has been extracted by the large language model. And the rhetoric theory is in the classical sense, following Aristotle’s three principles of rhetoric, which are as follows:

Ethos (credibility): The speaker’s authority and trustworthiness, earned through demonstrated expertise and honest acknowledgment of uncertainty. In decks, it shows up as methodology diagrams, citations, and openly naming the alternatives you considered which signal you’ve done the work.

Pathos (emotion): Appeal to the audience’s feelings, values, and aspirations including what they fear, hope for, or recognize from experience. In decks, it means opening with a problem the audience feels, validating their frustrations, and showing what success looks like, though pathos without logos collapses into demagoguery.

Logos (logic): Reasoned argument grounded in evidence, structure, and acknowledgment of counterarguments. Again, in decks, it appears as data visualizations, comparison tables, and a clear flow from problem to solution, but logos without pathos is just a lecture that produces disengagement.

And for this exercise, I finally got around to turning my rhetoric of decks essay into a skill called /beautiful_deck. You can call it now, and if you want to read more about it, just go here to my /skills directory. By default it will create a beautiful deck in LaTeX’s beamer package following my explanations of what I’m going for in decks: data visualization and quantification, beautiful slides, beautiful tables, beautiful figures, no walls of words, sentences or equations, minimal cognitive density per slide, max one idea per slide (two tops), Tikz for graphics and/or .png output from software like R, python or Stata, intuition, narrative, and finally technically rigorous exposition. But I’ve made it so you can also indicate you want it in a different format like Quarto, markdown, etc. Your call. /beautiful_deck also checks the compile log for overfull box warnings and the like, and makes an effort to eliminate them, no matter how cosmetic they are.

Skill: https://github.com/scunning1975/MixtapeTools/blob/main/.claude/skills/beautiful_deck/SKILL.md

Human-readable docs: https://github.com/scunning1975/MixtapeTools/tree/main/skills/beautiful_deck

Updated skills index: https://github.com/scunning1975/MixtapeTools/tree/main/skills

Updated presentations README: https://github.com/scunning1975/MixtapeTools/tree/main/presentations

Updated top-level README: https://github.com/scunning1975/MixtapeTools

/tikz skill

As we are going to make a beautiful deck in beamer using the /beautiful_deck skill, it will by default be using Tikz, the powerful graphics package with nearly impenetrable and very complicated (to me anyway) LaTeX syntax. You can find an example of it here.

Well, the default in the /beautiful_deck skill is to check for all compile errors where the content of the slide is basically spilling off the slide’s margins. This is when words go below the end of the slide and become unreadable, for instance.

But not all visual compile problems are caught within the /beautiful_deck skill itself. For instance, the labels on graphics routinely, when purely automated, will interfere with other objects. Words, for instance, will be interfering with boxes or lines. Things will cross one another. And this is probably related to the fact that large language models have poor spatial reasoning.

So, the /tikz skill is used to verify that words, equations, and other labels are not blocking or crossing other objects. One of the things /tikz relies on to correct for this in the decks is Bézier curves, which are parametric curves defined by control points. My /tikz skill uses depth formulas on them to detect and fix arrow/label collisions so that they don’t happen.

It’s not perfect. But, it can help. And I strongly encourage you to check your decks closely, and if you still see things you don’t like, get rid of them. In this day and age, we should not tolerate any cosmetic errors like the location of labels. Our goal is henceforth to make beautiful decks and beautiful decks have beautiful pictures, and beautiful decks do not have mistakes.
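To give a feel for the kind of collision check described above, here is a small sketch. It is not the /tikz skill’s actual formula, which I am not reproducing here. It is a generic illustration that samples points along a cubic Bézier curve and asks whether any of them land inside a label’s bounding box. The coordinates and box sizes are hypothetical.

```python
def cubic_bezier(p0, p1, p2, p3, t):
    """Point on a cubic Bezier curve at parameter t in [0, 1]."""
    s = 1.0 - t
    x = s**3 * p0[0] + 3 * s**2 * t * p1[0] + 3 * s * t**2 * p2[0] + t**3 * p3[0]
    y = s**3 * p0[1] + 3 * s**2 * t * p1[1] + 3 * s * t**2 * p2[1] + t**3 * p3[1]
    return (x, y)


def curve_hits_box(p0, p1, p2, p3, box, samples=200):
    """True if any sampled curve point lies inside box = (xmin, ymin, xmax, ymax)."""
    xmin, ymin, xmax, ymax = box
    for k in range(samples + 1):
        x, y = cubic_bezier(p0, p1, p2, p3, k / samples)
        if xmin <= x <= xmax and ymin <= y <= ymax:
            return True
    return False


# A bent arrow from (0, 0) to (4, 0) whose control points pull it upward.
arrow = ((0, 0), (1, 2), (3, 2), (4, 0))
label_on_path = (1.5, 1.2, 2.5, 1.8)  # hypothetical label box sitting on the curve
label_clear = (1.5, 2.0, 2.5, 2.6)    # same label moved above the curve

print(curve_hits_box(*arrow, label_on_path), curve_hits_box(*arrow, label_clear))
```

Notice that the control points sit at height 2 but the curve itself only reaches height 1.5 at its midpoint. For a symmetric cubic like this one, the apex is three quarters of the control-point height, which is why eyeballing where a curved arrow will pass based on its control points tends to go wrong.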

Skill: https://github.com/scunning1975/MixtapeTools/blob/main/.claude/skills/tikz/SKILL.md

Human-readable docs: https://github.com/scunning1975/MixtapeTools/tree/main/skills/tikz

Formula reference (shared with /compiledeck): https://github.com/scunning1975/MixtapeTools/blob/main/.claude/skills/compiledeck/tikz_rules.md

/referee2 skill

And the last skill is my /referee2 skill which writes referee reports critiquing code and decks. /referee2 is my adversarial audit skill with two modes: a code mode that performs a full five-audit cross-language replication protocol on empirical pipelines, and a deck mode that reviews Beamer presentations for rhetorical quality, visual cleanliness, and compile hygiene against the Rhetoric of Decks principles.

In deck mode it systematically checks every slide for titles-as-assertions, one-idea-per-slide, no wall of sentences, minimized cognitive density and balance across the deck, correct TikZ coordinate placement (using the same measurement rules as /tikz), and zero Overfull/Underfull/vbox/hbox warnings in the compile log. When complete, it files a formal slide-by-slide report with accept / minor-revision / major-revision verdicts. It is meant to run in a fresh terminal by a Claude instance that has never seen the deck, so the review is structurally independent from whoever built it. It also is used to audit code, though. For now, it is not really set up for paper review though I’m sure you can use it for that if you wanted (and I have).
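The Overfull/Underfull check is the easiest of these to do yourself. Here is a minimal sketch that scans the text of a LaTeX .log file for box warnings. It is my own illustration, not the code inside /referee2, and the sample log lines are made up in the format TeX normally prints.

```python
import re

# Matches lines such as:
#   Overfull \hbox (15.2pt too wide) in paragraph at lines 42--43
#   Underfull \vbox (badness 10000) has occurred while \output is active
BOX_WARNING = re.compile(
    r"^(Overfull|Underfull) \\([hv])box \((.*?)\)(?:.*?lines? (\d+)(?:--(\d+))?)?",
    re.MULTILINE,
)


def box_warnings(log_text):
    """Return one dict per Overfull/Underfull box warning found in the log text."""
    found = []
    for m in BOX_WARNING.finditer(log_text):
        found.append({
            "kind": m.group(1),
            "box": m.group(2) + "box",
            "detail": m.group(3),
            "line": int(m.group(4)) if m.group(4) else None,
        })
    return found


sample_log = "\n".join([
    r"Overfull \hbox (15.2pt too wide) in paragraph at lines 42--43",
    r"Underfull \vbox (badness 10000) has occurred while \output is active",
    "Output written on deck.pdf.",
])

warnings_found = box_warnings(sample_log)
for w in warnings_found:
    print(w)
```

To run it on a real deck you would read the log with something like `open("deck.log", errors="replace").read()` and pass that string in. A clean deck is one where the returned list is empty.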

Skill: https://github.com/scunning1975/MixtapeTools/blob/main/.claude/skills/referee2/SKILL.md

Canonical persona / full protocol: https://github.com/scunning1975/MixtapeTools/blob/main/personas/referee2.md

Human-readable docs: https://github.com/scunning1975/MixtapeTools/tree/main/skills/referee2

Beautiful deck request

And now we are ready. We are going to ask Claude Code to make a beautiful deck that explains to us the TWFE decomposition in CBS. The goal is to take a person who is unfamiliar with their decomposition from knowing absolutely zero to absolutely something.

But our goal is extremely narrow. The decomposition formula is pure algebra: it always gives numerically the same coefficient that least squares gives when it minimizes the sum of squared residuals, just arrived at by a different route. So we will not be focused on its causal interpretation just yet. Our goal is only to understand the algebra of the decomposition, divorced from causal inference and diff-in-diff entirely. So here it goes. It’s long, but that’s mainly user error. I tend to over explain things to Claude, which admittedly uses a lot of tokens, so feel free to change this if you want.

Please make a beautiful deck using the rhetoric of decks essay and /beautiful_deck of the CBS paper we summarized. Use the markdowns only. And you will apply it to the AEJ WTO paper that we also marked down. But today’s goal in this deck is extremely narrow. I want you to focus on the TWFE estimation, with a continuous dose in diff-in-diff, showing the equation, and its historic interpretation. Explain the context in which it is used in the AEJ paper. Then I want you to ONLY focus on the algebraic decomposition of the formula that is in Table 1 of the CBS paper. There are several decompositions associated with several different things so slowly take us through each one but do so using the rhetoric of decks applied to a group of people who are needing to go slowly and who find the notation confusing at first glance. So you should be heavy on the application first, the intuition second, always within narrative and Aristotle’s rhetoric principles, the actual data application (so produce R code or python code or Stata code that does this in replicable code), .tex files from that analysis, heavy on Tikz graphics and quantification, use .png, and so on. I want the audience to go from knowing zero about the CBS decomposition to understanding it at the end. But no causal inference. Only algebraic calculation of the weighting schemes involved, and the interpretation of each input using shading, highlights, little brackets beneath equations, building block graphics, and so on. Our goal in this is PURELY to understand the weighting involved in TWFE under these various measurements contained in Table 1 only. And remember: beauty first!
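To see what “only algebraic calculation of the weighting” means in the simplest possible case, here is a sketch with two periods and simulated data. This is not the CBS Table 1 decomposition, and the numbers are invented. It is just the textbook fact that a least squares slope can be rewritten as a weighted sum of outcomes, using the two-period case where the TWFE coefficient equals the slope from regressing each unit’s change in outcome on its dose.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 200
dose = rng.uniform(0, 2, n)  # hypothetical unit-level dose
y_pre = rng.normal(0, 1, n)
y_post = y_pre + 0.3 + 0.8 * dose + rng.normal(0, 1, n)
delta_y = y_post - y_pre

# Least squares slope of the change in y on the dose.
slope = np.polyfit(dose, delta_y, 1)[0]

# The same number written as a weighted sum of outcome changes.
weights = (dose - dose.mean()) / np.sum((dose - dose.mean()) ** 2)
weighted_sum = np.sum(weights * delta_y)

print(slope, weighted_sum)
print(weights.sum(), np.sum(weights * dose))
```

The weights sum to zero, and the weights times the dose sum to one. Units with below-average dose get negative weight, which holds no matter what the data look like, and that is the kind of thing you only notice once the coefficient is written this way.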

After it ran, I asked it to use /tikz to fix labeling, etc., and then /referee2 to critique it. Once those were done, I told Claude to do everything /referee2 said to do. This is not instantaneous, and it’ll most likely spend about 20k to 25k tokens all said and done. So not cheap, but at the same time, it wouldn’t get you on the Meta leader board, which was a contest they ran to see who could use the most tokens. The winner essentially cost the company over a million dollars over 30 days by using something like 1 billion tokens.

Almost certainly there is a more efficient workflow and I’m going to spend time one day trying to see what I can do to find it, but you get the gist. If you have tips on ways I might improve it, though, please leave them, and I will review them and have a conversation with my Claude to see if I should do it. Here is the final product.

I am going to, for today, just post what I have though I suspect I will tinker more with the deck later. I figure seeing the initial output, though, is probably okay for the substack, as who knows — maybe the initial will land. Though I tend to need things consistently reframed in a way that fits how my brain works, and I typically go round and round with Claude on deck production until it’s exactly how I like it. So I may have to do that after I start reading it.

CBS TWFE Decomposition Deck: https://www.scunning.com/files/cbs_table1_weights.pdf

Concluding remarks

That’s it for today. This was a lot. In the next entry, we will start unpacking the content from the deck but in the meantime, I encourage you to experiment with all of this on your end. That way you can get caught up in your own time. When we return, we will start trying to decipher the decomposition formula. You are encouraged to read the paper closely, but we will be narrowly focused on just the decomposition formula of the TWFE estimator in the continuous dose diff-in-diff case.

Thanks again for reading. This substack is a labor of love, and for the time being, I am going to continue to make the explicitly Claude Code posts initially free so that all readers can learn a bit how I use it. But I do encourage you to share this substack with others, particularly applied researchers wanting to learn Claude Code in the context of learning something they are mainly interested in — applied statistics, causal inference, data science, program evaluation and econometrics for their own research purposes. And if you aren’t already a paying subscriber, consider becoming one!
