Closing tabs: Tuesday edition

Game of Thrones, AI, Madelyne Pryor and a little love

I woke up at 2am, so like any rational person I grabbed my phone rather than fight my way back to sleep, and tried to clear the 70 or so open links on it, several of which were about our blizzard in Boston yesterday. Thanks again everyone for your support! If you enjoy this Substack, consider becoming a paying subscriber! It’s only $5/month, which is the lowest price point Substack allows.

If you haven’t seen Chris Cornwell’s discussion of using Claude Code and Cowork for professional work as a social scientist, you should. There are things in here not commonly discussed, such as the relative merits of Cowork and collaborating with coauthors and students using it, plus many other things I’ve not seen elsewhere. It’s also a slick design.

Boris Cherny encourages teams to shrink at the extensive margin (meaning fewer people) to let Claude Code pick up the difference. That’s the partial equilibrium. The general equilibrium, when all fixed costs are variable costs, is a whole other readjustment.

A Knight of the Seven Kingdoms finished its short but excellent first season the other day. I highly recommend it. It’s a fresh contribution to the GoT material by HBO. It also confirms a theory. I used to be so obsessed with the various theories but now I don’t really care. I did and do love his character though.

The reforming of the original X-Men as the new X-Factor is an emotional center for me in my own personal story because that is when I transitioned from collecting Archie Andrews, Transformers and GI Joe comic books to mutant-based comics. X-Factor #1 came out during the last few years of living in Brookhaven. In it, they discover that Jean Grey is still alive. I was 11 years old and would spend hours and hours sitting below a comic book rack at a pharmacy down the street from my house reading stacks of comics, and the feelings I felt discovering X-Men to this day fill me with emotions I don’t think I feel or have felt anywhere else. The story of Madelyne Pryor has therefore always been special to me, random as that may be. They retconned her into being a Jean Grey clone, and gradually she became evil during the Inferno crossover story. Apparently they’re doing something with her again. I don’t read comics anymore, nor can I easily get through superhero stuff anymore, but I will always be protective of those memories.

Boston got either a blizzard or a Nor’easter depending on which website I read. Here are two videos I filmed of me out in it yesterday, walking to meet a friend for pizza at lunch. Absolutely divine. I felt like a kid.

It even broke a record! We got 32 inches of snow.

Harvard classes were remote yesterday, so I taught online about the conditional expectation function and a tiny bit about sampling. Here are my slides.

More people predicting the demise of dating apps. There was also a thing about it on The Daily.

Is AI bearish or bullish for the market? Maybe it’s so bullish it’s bearish? I already can never remember which animal means which thing, so I’m probably now ruined by this idea that they can mean the same thing if extreme enough.

AI, machine learning, banking and finance and why “mo models, mo problems”.

A new Ryan Murphy show about JFK Jr and Carolyn Bessette. The only reason I’d want to watch this is because it would fill in some of the tabloid-related holes in my human capital from this relationship, which I for some reason followed closely-ish as a kid. For most of my life I was very interested in Hollywood, and for reasons I’ll never really understand, that interest tended to bring the Kennedy family into my purview. It may have been because of the movie JFK by Oliver Stone, but it seemed like, more generally, Hollywood was always interested in the Kennedys even before then. It could’ve been the Marilyn Monroe connection; I was also pretty interested in learning more about her. Anyway, I remember JFK Jr dying, crashing his plane into the ocean, with his wife and her sister. I had loved that he, like his sister, seemed to be more interested in words than politics.

Teen cannabis use and psychosis. Are they related? Is that sample selection bias? Is it causal? One thing is for certain: people who work in law enforcement and mental health inpatient facilities seem to treat the link as causal, and as such an obvious one that even questioning it makes you sound like someone denying the earth is round. That’s the one thing that has always struck me: the link is accepted as unquestionable fact by professionals at the intersection of those two fields.

Claude Code is an Electron app. Also, Boris still replies to questions on Hacker News.

Bill Gates told Microsoft CEO Satya Nadella that betting a billion on OpenAI was not going to be profitable. Now Microsoft has a nearly 30% stake in the company, worth $135 billion, and a reworked deal that lets OpenAI get away from exclusively using Azure also gets Microsoft 20% of OpenAI’s annual revenue into the mid-2030s.

Two people went on a date and a journalist asked them how it went. If AI eliminates this kind of pop journalism job, I won’t cry.

Jason Fletcher is doing a series of his thoughts on using Claude Code, and AI more generally, for empirical research. Here he says that AI didn’t make research faster — it just moved the bottleneck. The fixed cost of starting a project is now nearly zero, so your list of promising ideas explodes, but you still only have the same number of hours to actually finish papers. Add in the new cost of verifying AI output, and you’re more congested than before, just at a different stage. Jason’s fix: use RAs not to review AI code but to independently replicate what the AI already found, so you never assign a dead-end project again and your verification problem solves itself.

Speaking of Jason Fletcher, here are two new papers at NBER on NAFTA and mortality: one by Jason and his coauthor, Hamid Noghanibehambari, and another by Amy Finkelstein and coauthors.

Wikipedia blacklisted a blogger who runs a popular site that lets you access gated material for free. The blogger began inserting things into the link to cause a denial of service attack against someone who had outed his identity, among other things.

Five couples who have been married for 20+ years share stories about survivor bias. I mean about what makes marriages last.

Stepping back from providing emotional labor in relationships can reveal some of the craggy rocks and weird sealife hidden by the tide.

This article about AI went viral and some say it spooked markets. See earlier post above (linked again here).

People seem to like the third season of Night Agent. I couldn’t finish season two despite loving season one. And I can’t seem to get through one episode of the new season. I mainly watch it for the face the main actor playing the Night Agent makes. He also seems like a sweet guy, almost like a big kid.

Predictions by Nick Bloom and a dozen other coauthors about firm demand for labor and AI, productivity and other things about AI.

A new AEJ: Macro paper builds a custom text-based measure of Fed policy stance from a language model trained on staff-drafted dovish/hawkish alternative FOMC statements, then decomposes each statement into expected versus surprise components using high-frequency financial data. The payoff is a framework that can run counterfactuals, showing how different Fed communication choices would have moved markets. I may try to run the analysis through OpenAI like I did with that PNAS paper and see whether zero-shot is much different (see part 5 in that 5-part series I did).

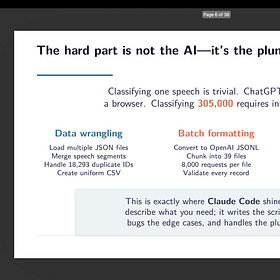

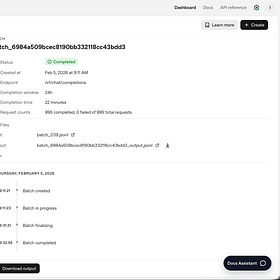

Claude Code 22: Final Entry Into Classification of Speeches with Claude Code and OpenAI gpt-4o-mini (Part 5)

Today is the fifth and last entry into my replication of the Card, et al. PNAS paper. You can find the others here in order from 1 to 4:

Important trends in the gender gap among professionals.

Interesting sounding new paper on blood donation by Evan Rosenman and coauthors. Using a discontinuity in hemoglobin eligibility thresholds for blood donation, they find that deferring donors reduces their future volunteerism — but the catch is that medical staff manipulate reported hemoglobin levels around the threshold, which invalidates standard RD designs. To handle it, they develop a partial identification approach that produces valid bounds even when the running variable is manipulated, with broader applicability to other RD settings facing the same problem.

MacArthur Foundation is putting $10 million into Humanity AI, a coalition of ten major foundations (Ford, Mellon, Mozilla, Omidyar, and others) committing $500 million over five years to ensure AI is shaped by people rather than just Silicon Valley — funding researchers, journalists, and policy organizations working on AI governance across democracy, education, labor, and the humanities.

A popularly demanded use of LLMs by academics is the lit review. But LLMs can’t reliably attribute ideas to their original sources — they favor famous, highly-cited authors and replicate existing citation biases — so letting them handle attribution would disproportionately erase underrepresented scholars whose work is already undercited. The authors reject the “collaborative human-machine authorship” solution and instead insist researchers must remain fully accountable for every claim, manually tracing ideas back to their original authors.

Interesting critique of AI at a substack I found. AI isn’t just a tool that helps you work faster — it’s a “meta-temptation” that quietly removes the conditions under which real thinking happens (something I’ve warned about too on here). By outsourcing deliberation (summarizing the paper instead of reading it, drafting the email before you’ve decided what you think), you gradually erode the very faculty you’d need to recognize you’re doing it, so the rationalizations (“I’ll review it anyway,” “the ideas are mine”) feel reasonable even as the boundary between trivial and meaningful tasks blurs beyond recognition.

But point counterpoint: these authors say science should be machine readable and propose something to make it more easily extractable by AI.

But after you read that, read this, and ask yourself the hard questions about the isoquants’ shape around expert work from machine versus human time going forward.

Researchers built a 322-question benchmark of expert-level, “Google-proof” virology lab troubleshooting problems — and top AI models like OpenAI’s o3 score 43.8%, outperforming 94% of PhD virologists on their own specialties, while the human experts average only 22%. The alarming implication is that publicly available AI already functions as an expert virologist, which raises urgent biosecurity governance questions about dual-use misuse.

As Claude Code advances across the global economy, and automation causes a shift in the intercept of aggregate production, not everyone can participate due to proprietary data, data use agreements, and privacy. Until more licenses and protections are afforded to researchers and agencies, maybe a band-aid solution is to get a machine off the grid with its own primitive version of Claude on it.

Researchers at the Federal Reserve Board apply NLP and sentiment analysis to 55 years of Beige Book reports and find that the anecdotal text actually carries meaningful signal: even after controlling for lagged GDP and other standard metrics, Beige Book sentiment outperforms the yield spread in nowcasting GDP growth and forecasting recessions. Topic modeling adds further value by revealing the shifting narratives driving economic conditions across different historical periods.

Again, this reminds me of the relevance of the little five-part Substack series I did over the last month, using gpt-4o-mini and one-shot batches to classify 300,000 speeches for only $11 and 2 hours. Here’s part 1:

Claude Code Part 14: I Asked Claude to Replicate a PNAS Paper Using OpenAI's Batch API. Here's What Happened (Part 1)

I’ve been experimenting with Claude Code for months now, using it for everything from writing lecture slides to debugging R scripts to managing my chaotic academic life. But most of those tasks, if I’m being honest, are things I could do myself. They’re just faster with Claude.

Have Claude Code and other AI agents like it shifted the economics of AI from GPU-intensive compute to local CPU-intensive compute, and if so, are we about to see an increase in demand for more CPU, and more advances there?

Some tea about the future of A Knight of the Seven Kingdoms.

And Bad Bunny’s Super Bowl show sent one of his songs to the top of the charts.

And with that, I’ve officially gotten my browser tabs down to only 3 links, one of which is a Google search result of queso recipes that I can neither post nor delete. Have a great day, stay warm, play in the snow!

Thanks, Scott, for another excellent curation. Do you think the Microsoft acquisition of OpenAI is an attempt to remove the competition, an element of sunk cost fallacy, or something else altogether?