Closing tabs: Saturday Morning Edition

Lots of AI and love, plus statistics and the best cordless vacuum cleaners

Here are several links I need to get off my chest as they are burning a hole in my browser tabs. Lots of love in today’s posts, as well as AI, but I don’t think as much love towards AI itself, though maybe. Thanks again for your support of the substack! For only $5/month, you too can get a gajillion posts a week about love and AI!

Love comes in numbers

The 777 rule: every 7 days you have a date. Every 7 weeks you do a weekend away. And every 7 months you go somewhere for a big getaway, maybe for 7 days? I think that was it. Anyway, I could get behind that kind of rule.

And here are 8 ways to bring warmth back into your relationship. One of them I was glad to see — take responsibility for your own unhappiness. I heard someone say though that no one can cause our happiness but they can cause our unhappiness, which may be why the 8th thing in that list is to accept when it may be time to exit.

Six things couples can do to cultivate a thriving relationship. Number six is cultivating friendships outside of the relationship — which is something I see constantly in these lists. For instance it shows up in this list of five things too.

People who love people learn to accept 4 things about them.

White chocolate highlights.

Michael Pollan has a new book coming out about consciousness. Here’s a NYT interview with him. There’s also something in Nature covering it too.

Interesting-sounding memoir by the former CEO of Sony (now chairman of Snap). He recounts the worst mistake of his career, which was to greenlight the Seth Rogen and James Franco movie where they hatch a scheme to assassinate the leader of North Korea. That led to a major hack in which nearly all of Sony's servers were destroyed, and scripts, emails, movies, health records and social security numbers (basically everything the hackers could grab) were released online. The book sounds like it's him processing that and his whole life.

Peter Hull contributing to IO.

What academics can learn from comics and performers.

Here’s a guide for beginners wanting to improve their coffee bean buying game.

Stephanie Eckman on the use of synthetic data in social science (a deck).

Tabaré Capitán, an economist, on his experience writing his first python package.

Claude Code starter kits for researchers

Here’s a couple of brand new Claude Code starter kits for researchers, both by coauthors and friends. First, Jared Black has written a really great piece on workflow for AI-assisted research that some of you may find useful.

And Christopher Cornwell at the University of Georgia has a manual for empiricists (economists, data scientists, everyone) on Claude Code that also dives into the relative usefulness of Code over Cowork, which is the first starter pack I’ve seen anywhere that is diving into that.

The best cordless stick vacuums from Wirecutter.

Back in BBS days from the late 80s, ASCII was king. Here is a deep dive into ASCII graphics.

Connect Claude or ChatGPT directly to India's official government statistics.

Harvard physicists use a chatbot as a coauthor on a paper because of a major discovery made together. New times.

The history of the Kaplan-Meier survival curve.

Comparing Codex and Claude Code, and when one seemed to be stronger at what kinds of tasks and why.

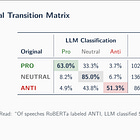

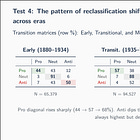

Some of you may have been following my video series where I have been sending 300,000 Congressional speeches and presidential communications from 1880 to now to OpenAI for classification. This wasn’t exactly a replication. It was more that I wanted to show how to use Claude Code to submit texts to OpenAI via batch requests (to get the 50% discount), get the results back within hours for only $11, and have it all analyzed. In other words, I was just trying to illustrate another use case for Claude Code. But what happened was that I was genuinely surprised to find that gpt-4o-mini had reclassified around 100,000 of the 300,000 speeches that the original authors had classified with their own RoBERTa approach (which used around 7 students who annotated 7500 speeches and then predicted the rest), and yet, despite the reclassification, the analysis yielded the same results. And then I spent even more substacks trying to nail down why that was, and so the series sort of branched off.
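For anyone curious what a batch submission looks like in practice, here is a minimal sketch of building the input file. It assumes the one-request-per-line JSONL shape that OpenAI's Batch API takes, as I understand it (`custom_id`, `method`, `url`, `body`). The prompt text, the function names and the speech IDs are all made up for illustration and are not what was used in the series. Uploading the file and creating the batch job are left out.

```python
import json

# Hypothetical instruction; the real prompt used in the series is not shown here.
SYSTEM_PROMPT = (
    "You will be given a speech. Classify it and reply with exactly one of: -1, 0, 1."
)


def build_batch_line(speech_id, text, model="gpt-4o-mini"):
    """Return one JSONL line describing a single chat-completion request."""
    request = {
        "custom_id": speech_id,  # lets you match each result back to its speech
        "method": "POST",
        "url": "/v1/chat/completions",
        "body": {
            "model": model,
            "temperature": 0,
            "messages": [
                {"role": "system", "content": SYSTEM_PROMPT},
                {"role": "user", "content": text},
            ],
        },
    }
    return json.dumps(request)


def write_batch_file(speeches, path):
    """Write one request per line for a dict of {speech_id: text}; return the count."""
    with open(path, "w", encoding="utf-8") as f:
        for speech_id, text in speeches.items():
            f.write(build_batch_line(speech_id, text) + "\n")
    return len(speeches)


if __name__ == "__main__":
    # Toy input, purely illustrative.
    demo = {"speech-0001": "Mr. Speaker, I rise today to ..."}
    n = write_batch_file(demo, "batch_input.jsonl")
    print(f"Wrote {n} request(s) to batch_input.jsonl")
```

The `custom_id` is the important design choice: batch results are not guaranteed to come back in input order, so each speech needs its own stable ID to merge the labels back onto the original data.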

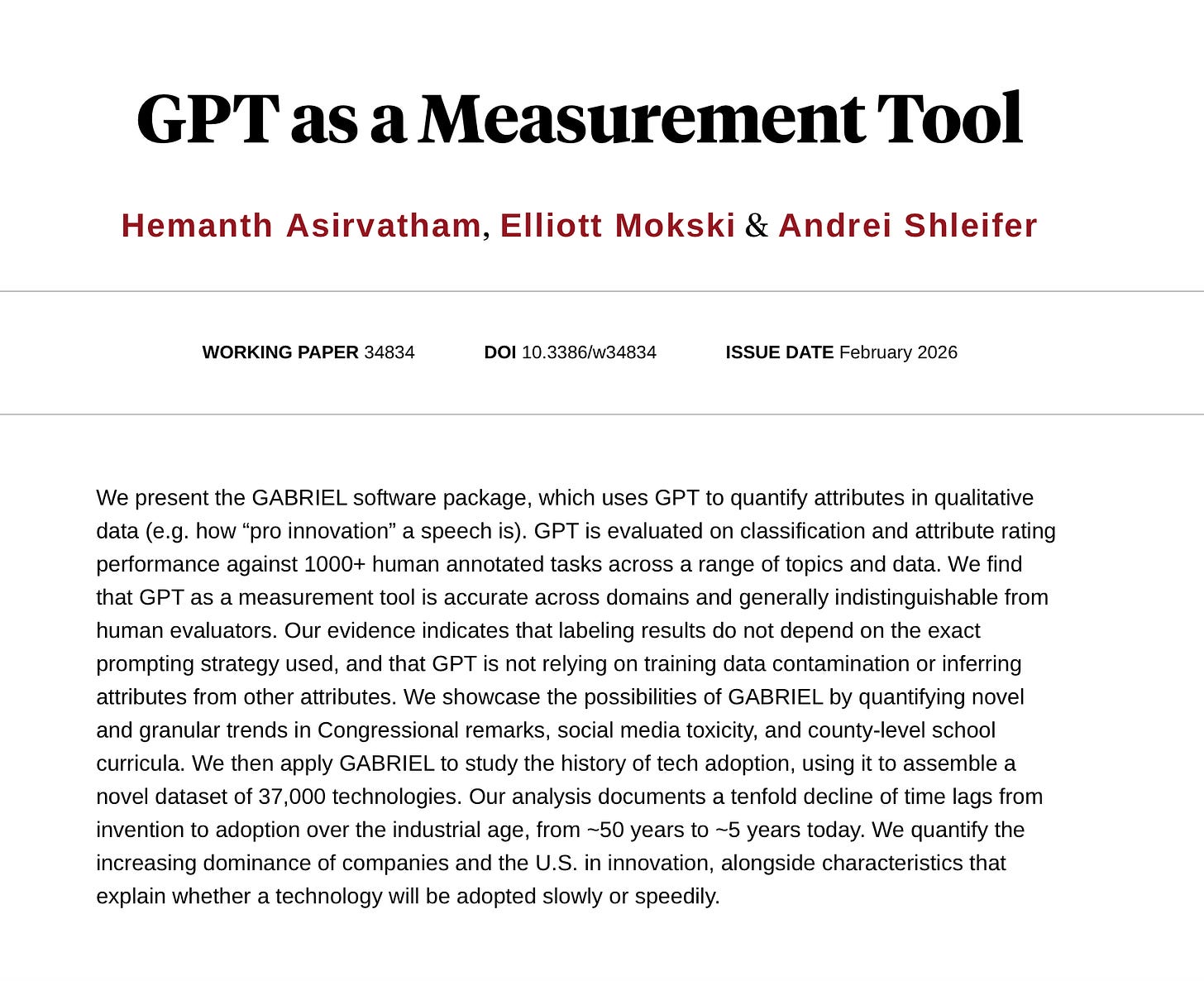

Well, check out this new paper by Asirvatham, Moski and Shleifer. They appear to provide more evidence for this approach of using one-shot GPT classification methods. Now, they’re using some kind of GPT software called GABRIEL, so maybe there are some gains from using GABRIEL over gpt-4o-mini. But put that aside. It’s more evidence that GPT can be used to classify texts for academic research, and since I did it for only $11 and in around 2 hours on 300,000 speeches, I think it speaks volumes about how AI is going to democratize research possibilities for under-resourced researchers globally. And not only that: I bet it cost the Card et al. team upwards of $10,000 for 7 students to label 7500 speeches. And for what? To assign a -1, 0 or +1 to a speech. Well, just wait until you see what I’ve been working on with a major project I’m just about ready to release. Then you’ll see we badly need the marginal costs of classification to fall so that actually meaningful classifications can happen. LLMs are speed readers with perfect memory and impeccable analytical skills. And as David Autor has said, they can extract the tacit knowledge in human communication, things that are quite hard for anyone to pull out or explain. So this is just another public service announcement that Claude Code can help you level up to the place where you’re processing these texts at scale, and I think we are going to probably see that coming very soon, if it’s not already here now. Assume it’s here now actually.
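To make the marginal cost point concrete, here is the back-of-the-envelope arithmetic on the numbers above. The $10,000 is only the guess stated in the text, not a reported figure, and the variable names are made up for illustration.

```python
# Figures from the post; the human-labeling total is a guess, not a reported cost.
llm_total_cost = 11.0        # dollars, batch run on gpt-4o-mini
llm_speeches = 300_000
human_total_cost = 10_000.0  # dollars, assumed
human_speeches = 7_500

llm_cost_per_speech = llm_total_cost / llm_speeches
human_cost_per_speech = human_total_cost / human_speeches
ratio = human_cost_per_speech / llm_cost_per_speech

print(f"LLM:   ${llm_cost_per_speech:.6f} per speech")
print(f"Human: ${human_cost_per_speech:.2f} per speech")
print(f"Human labeling is roughly {ratio:,.0f}x more expensive per speech")
```

Under those assumptions, human labeling runs a bit over a dollar per speech while the batch run comes in at less than a hundredth of a cent per speech, a gap of more than four orders of magnitude.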

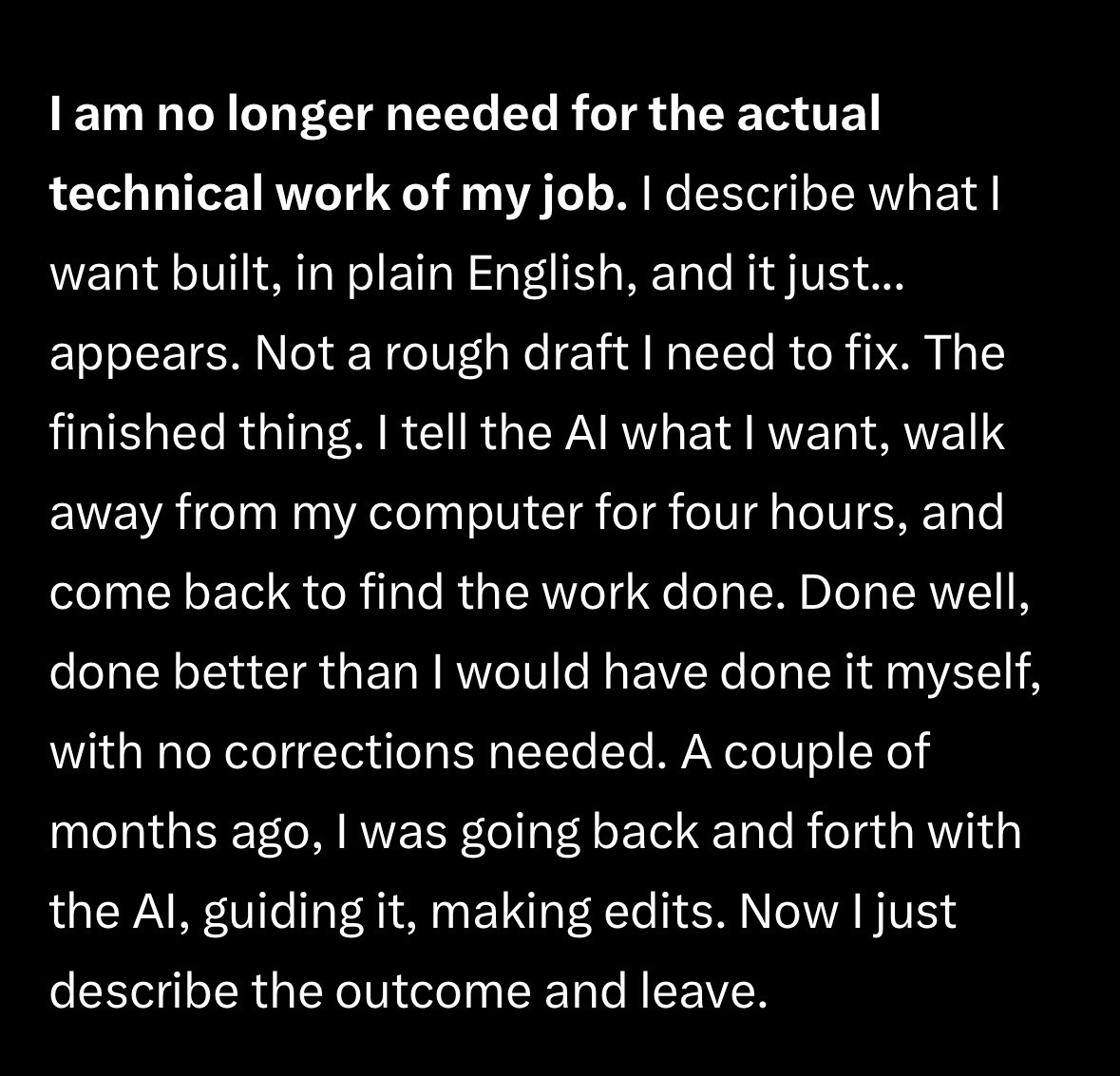

A New York Times opinion piece on Claude Code says that it and agents like it are the AI disruption we’ve been told was coming. This essay on Twitter also went viral, and I suspect the errors are correlated with both coming out at the same time.

One paragraph from it.

This is weirdly immediately gripping. It’s an animated history of the Tower of London going back to 50 AD when there was no tower there best I could tell.

I’ve decided to believe that this thread happened not through an LLM, but good old fashioned detective work aimed at absolutely pointless and yet valuable tasks.

Dario Amodei, CEO of Anthropic, has a long and serious essay from January 2026 on “powerful AI” which may be nearly here and which has a decent amount of bad stuff in a dense stack of probability at the tail.

Here’s Jim Heckman at Texas Tech this week. Wreck ‘em! Pew pew! (HT LinkedIn)

Not sure what this YouTube video is about except that it’s a Bloomberg podcast about Claude Code from a month ago. So even journalists are finding Claude Code and being blown away.

Ethan Mollick had, I think, either Claude Code or ChatGPT make a series of PDFs containing all the weights for GPT-1, which are presented beautifully here.

Ethan also says maybe think twice before using someone else’s /skill.

Boris Cherny, creator of Claude Code, thinks computer engineer as a job title and a human role is not going to be here after this year. Note it isn’t that the humans are gone but rather that the tasks they did will now be automated. And that’s coding.

Here’s a large cushion for sitting on the floor.

University of Western Australia is hosting its annual Labour Econometrics Workshop. Keynote speakers are Muriel Niederle and Orley Ashenfelter. The key dates (AWST) are:

Paper submissions open, Registrations open: Tuesday, 17 February 2026

Submission deadline: Friday, 10 April 2026

Notification of outcomes: Friday, 24 April 2026

Registrations close: Thursday, 9 July 2026

Workshop dates: Thursday, 16 July - Friday, 17 July 2026

There are three things contemporary AI cannot do that humans can: long-term planning, consistency, and learning consistently. (Some humans anyway).

Marriage and the intergenerational mobility of women.

AI dating apps will be a matchmaker, but they won’t use swiping. I’ll be curious to see what this next stage of relationship markets becomes.

Success depends on developing skills of tolerating uncertainty and the unknown.

Microsoft AI CEO says most white collar jobs will be automated within 18 months.

Taylor and Travis are getting married at the Ocean House, a breathtaking hotel on the coast of Rhode Island, and there is a penthouse there that can be yours for only $20m! I continue to dream of living on the coast of Rhode Island.

The great Tom Hagen, aka Robert Duvall, has passed away at 95. One of the greatest actors in Hollywood history.

If you’re ever in Waco, Texas, check out Kitok’s, a 50-year-old institution in the city with amazing burgers and fries. But first check if it’s open.

People who lived into their 80s followed these 8 things. (I love these numbered articles).

Gen Z prefers a good night’s sleep to sex.

Is Getty Images the best photo website ever?

Spotify developers are no longer coding. They haven’t written new code in a month and have AI agents doing it instead, while they have moved to supervisory roles only. That’s btw a partial equilibrium not a general equilibrium.

The crucial elements of meaning and purpose are to do with staying true to what matters most to you. And the second you stop doing that, that’s when everything becomes hollow. Have Claude read this, and then ask him to interview you one question at a time until he can articulate what your thing is. Mine was my curiosity.

John Lennon’s piano at Christie’s for around half a million.

AI video of Brad Pitt fighting Tom Cruise.

During the federal shutdown, squatters and illegal BASE jumpers showed up at Yosemite National Park. Saw some of that on Untamed last night, which I highly recommend on Netflix. Here’s a story of a guy who overstayed his welcome at the park.

The story of an outside forensic audit discovering data fabrication in a high-profile publication predates the Gino case and Data Colada. A decade ago, it was the LaCour (2014) study that turned out to have fabricated data. That was discovered by then grad students Broockman and Kalla, with Peter Aronow, and published as a working paper titled “Irregularities in LaCour (2014)”. That was the first time I had ever seen R Markdown. I have the students replicating this forensic exercise, the original LaCour and Green paper in Science, as well as the follow-up RCT by Broockman and Kalla (2016) in Science. These are my slides.

I first learned about Peter Aronow through that “irregularities” paper and now use his book with Miller, Foundations of Agnostic Statistics, for my Gov 2001 class. Thursday I taught all three papers, and students have a homework assignment to replicate all of them for Gov 51. You can find the papers, slides and so on here.

Speaking of foundational statistics. I was reminded of this book the other day while prepping my probability class. Not sure what popped it into my mind. It’s by Leonard Savage and it was a breakthrough in modern statistics: The Foundations of Statistics. The book was considered one of the most important in the Bayesian statistics project ever.

I’m thinking of having a small dinner party here at the apt serving brisket tacos, white queso and chips, salsa, maybe guacamole and margaritas. Here’s a 32 oz mason jar for when you have friends over and want something different. But maybe these 16 oz colored ones are more your speed? Or perhaps these more traditional heavy base glasses are better. This is the Dutch Oven I got for braising the brisket. I decided not to get the top of the line Le Creuset one because I figured why do that when I’ll rarely use this again, but that’s the one we had. I am debating having door prizes (I might have gone overboard). Guests may leave with Texas Blue Bonnet seeds, some De la Rosa Mazapan peanut candy, and maybe these magnets of Texas. I wanted my new friends to have a little bit of my world.

I’m going to make grilled cheese sandwiches one night and decided to get this unnecessarily heavy 3.5 pound stainless steel burger smasher to do it. It’s like a gigantic slab of metal and I love it.

Don’t forget about the coming deadline for papers at the Journal of Economic Psychology on Human-AI interactions. You still have time! Note they are looking for empirical papers, experimental and quasi-experimental. It’s a narrow topic, but you may still have the right stuff.

OpenAI also has a piece on how to leverage Codex better in this AI-driven research world that I think is useful.

More on aphantasia, what’s missing, and how it may work. Ask Claude to interview you one question at a time using real survey questions and then tell you at the end whether you have aphantasia and, if so, what that means. Just make sure you say one question at a time.

And that’s about it! Have a great weekend. Stay hydrated and don’t get sick!

Honestly, seeing my piece pop up in your tabs made my morning. But the part that got me thinking was your Congressional speech analysis. That's exactly the kind of use case most people overlook. Everyone's building chatbots while the real power is in letting these tools chew through datasets that would take a human researcher weeks.

Your point about democratizing research hits hard. I've seen firsthand how a solo developer with the right tools can now do work that used to require a team. Not perfectly, not every time. But the gap is closing fast.

One thing I'd push back on slightly: "Anthropic loyalist" is generous. I just happened to find real, measurable differences when I actually tested the tools side by side. Plenty of failures along the way too. That's what makes the comparison honest.

Thanks, Scott, for another great mix. In particular, I think the point you made about Ethan Mollick's observation not to use other people's skill files in Claude is a great one. In many instances, there's just no need either. You can have, as you know, just a dialogue with Claude Code to help you determine the skills that you need.

One thing that I've been doing recently is getting Claude to read its own documentation manuals and make sure that it's up to date with using all of the various features in a way that improves both of our workflows. Thanks again for the great read, and have a wonderful weekend when it comes.